Machine Learning Mastery

9 min read

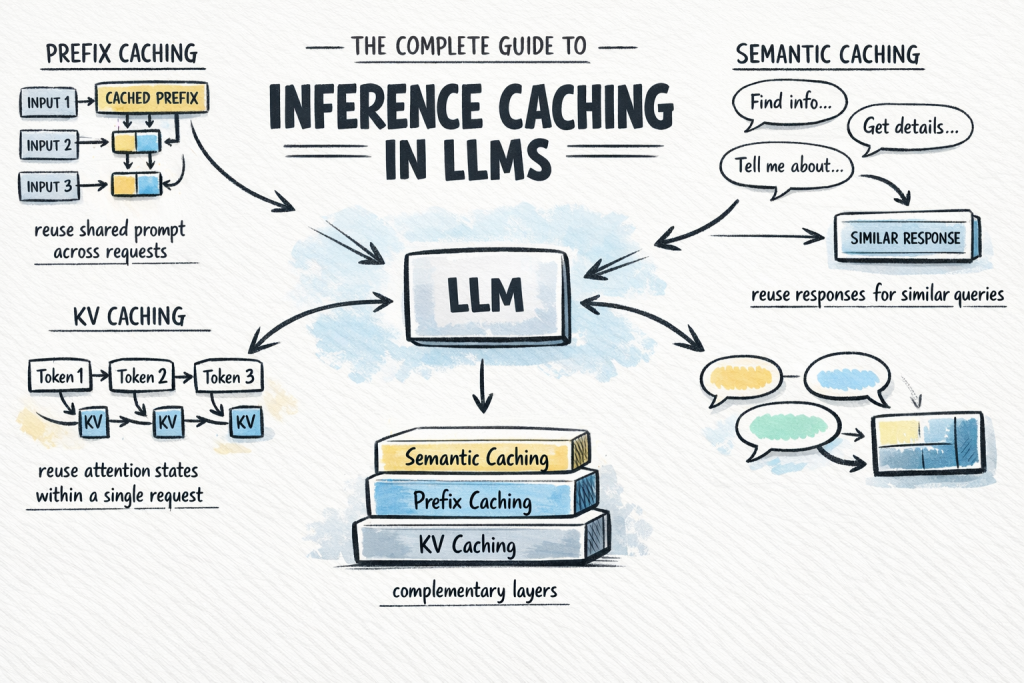

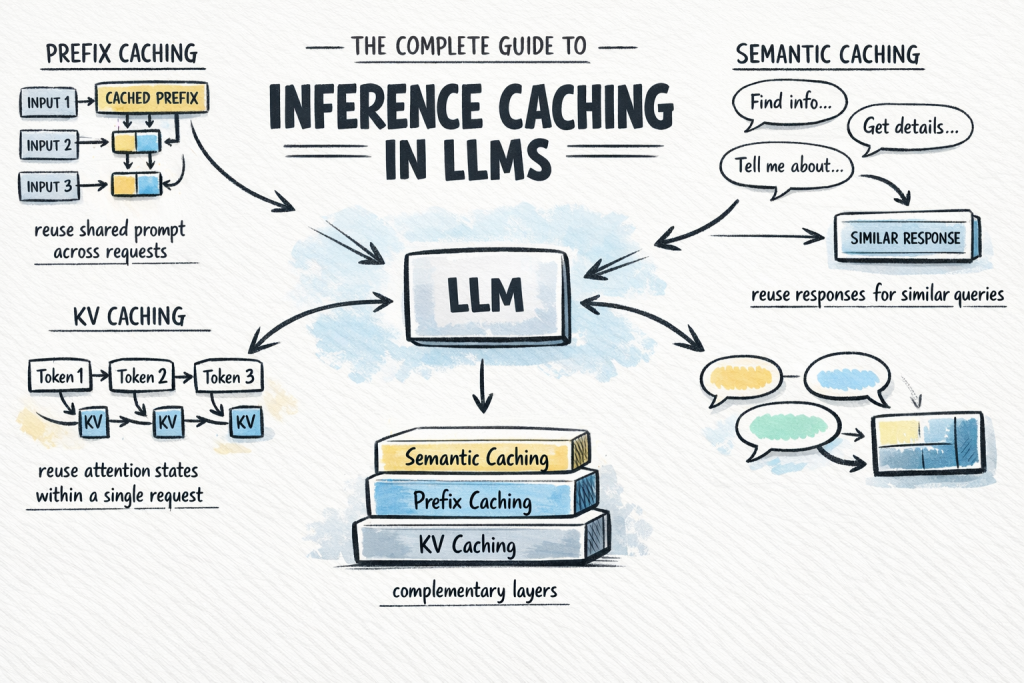

The Complete Guide to Inference Caching in LLMs

Calling a large language model API at scale is expensive and slow.
