TL;DR

- RAG retrieved the right document. The LLM still contradicted it. That is the failure this system catches.

- Five failure patterns: numeric contradictions, fake citations, negation flips, answer drift, confident-but-ungrounded responses.

- Three healing strategies fix bad answers in-place before users see them.

- No external APIs, no LLM judge, no embeddings model — pure Python under 50ms.

- 70 tests. Every production failure mode I found has a named assertion.

The model was lying (why I built this)

I’m building a RAG-powered assistant for EmiTechLogic, my tech education platform. The goal is simple: a learner asks a question, the system pulls from my tutorials and articles, and answers based on that content. The LLM output should not be generic. It should reflect my content, my explanations, what I’ve actually written.

Before putting that in front of real learners, I needed to test it properly.

What I found was not what I expected. The retrieval was working fine. The right document was coming back. But the LLM was generating answers that directly contradicted what it had just retrieved. No errors, no crashes. Just a confident, fluent answer that was factually wrong.

I started researching how common this failure is in production RAG systems. The more I looked, the more I found. This is not a rare edge case or a bug you can patch. It is a structural property of how RAG works.

The model reads the right document and still generates something different. The reasons are not fully understood: attention drift, training biases, conflicting signals in context. What matters practically is that it happens regularly, it is not predictable, and most systems have nothing to catch it before the user sees it.

Here is what makes it more dangerous than standard LLM hallucination. With a plain LLM, a wrong answer is at least plausibly uncertain. The user knows the model is working from training data and might be wrong. With RAG, the model read the correct source and still contradicted it. The user has every reason to trust the answer. The system looks like it is doing exactly what it was designed to do.

The model isn’t just failing; it’s lying with a straight face. It produces these fluent, authoritative responses that look exactly like the truth, right up until the moment they break your system.

I spent months researching documented production failures, reproducing them in code, and building a system to catch them before they reach users. This article is the result of that work.

All results are from real runs of the system on Python 3.12, CPU-only, no GPU, except where explicitly noted as calculated from known inputs.

Complete code: https://github.com/Emmimal/hallucination-detector/

Before anything else — here’s what the system produces

    ============================= test session starts =============================
    collected 70 items
    TestConfidenceScorer                5 passed
    TestFaithfulnessScorer              5 passed
    TestContradictionDetector           7 passed
    TestEntityHallucinationDetector     5 passed
    TestAnswerDriftMonitor              6 passed
    TestHallucinationDetector          24 passed
    TestQualityScore                   18 passed
    ============================= 70 passed =============================

70 tests. Every named failure mode I have encountered has an assertion. That number is the point of this article, not a footnote at the end.

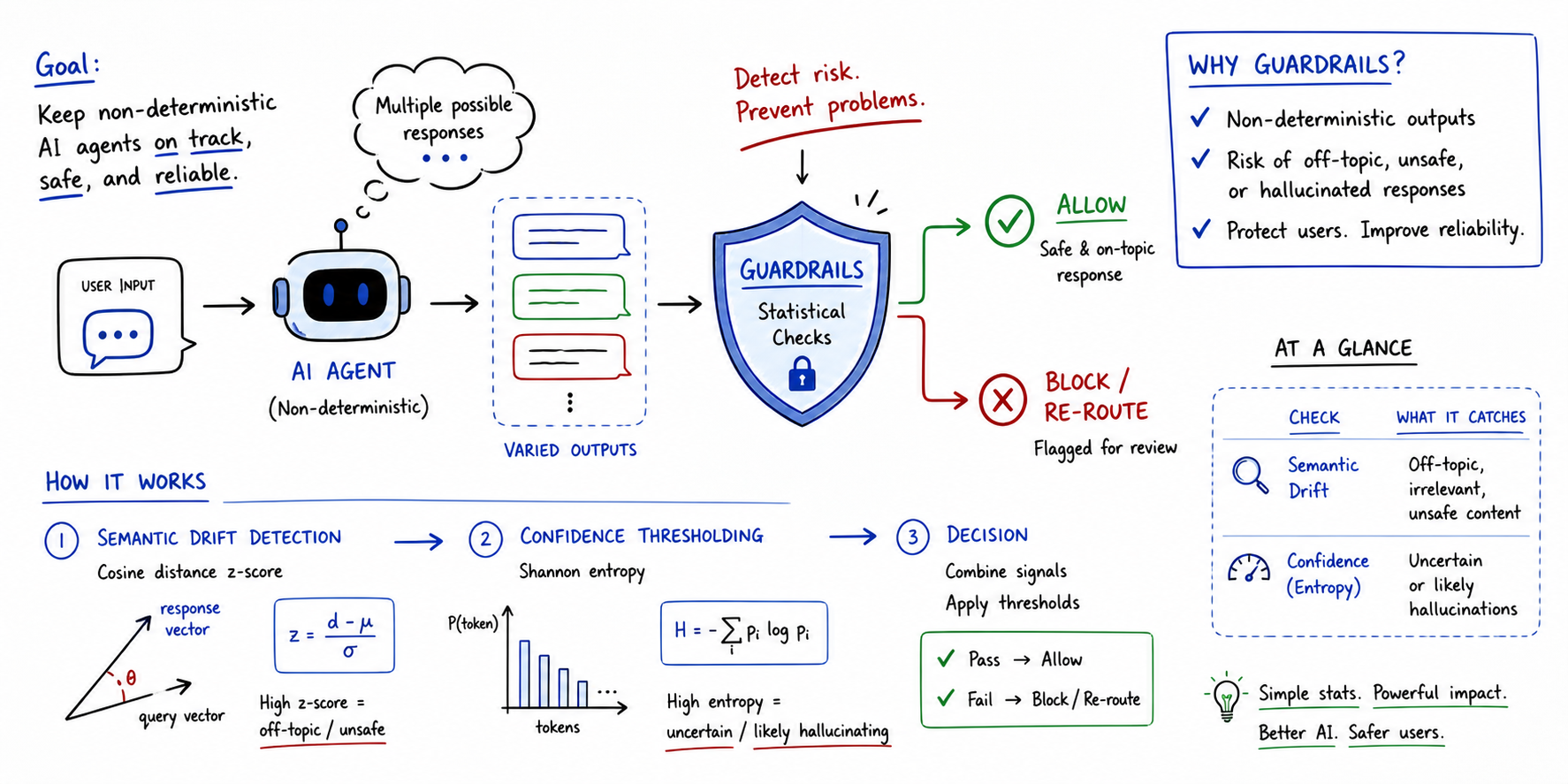

Where most RAG systems fail

Most RAG tutorials stop at: retrieve documents, stuff them into a prompt, call the model.

That works until it does not.

The whole promise of retrieval-augmented generation is grounding. Give the model real documents and it will use them. In practice, RAG creates a failure mode that is more dangerous than vanilla hallucination, not less.

This is not about conflicting retrieved documents. That is a separate problem I covered in Your RAG System Retrieves the Right Data — But Still Produces Wrong Answers. This is about a model that retrieved exactly the right document and still answered incorrectly.

This happens for reasons still not fully understood: attention mechanisms drifting to irrelevant tokens, training biases toward certain phrasings, the model averaging across conflicting signals in context. What matters for production is that it happens regularly, it is not predictable, and the only reliable way to catch it is a check on the final answer before it leaves your system.

When I was going through documented production failures, five patterns kept showing up.

The first is confident wrong answers. The model uses words like “definitely” or “clearly stated” while asserting something that has no basis in the retrieved source. The assertive language is the problem — it removes any signal that the answer might be wrong.

The second is factual contradictions. The context says 14 days, the answer says 30. The context says annual billing, the answer says monthly. The source was there. The model just ignored it.

The third is hallucinated entities. Person names, paper citations, organization names that do not appear anywhere in the retrieved documents. The model invents them and presents them as fact.

The fourth is answer drift. The same question gets a different answer over time. This one is silent — no error, no flag, nothing. It usually gets caught in a financial audit or a user complaint, not by the system itself.

The fifth is what I call confident but unfaithful. The model sounds certain throughout the answer but most of what it says cannot be traced back to any retrieved source. High confidence, low grounding. That combination is the most dangerous pattern I found.

Most detection frameworks flag these and hand them back to your application code. None of them fix it. That is the gap this system closes.

The architecture: detect, score, heal, route

    retrieve(query)
      → generate(query, chunks)
      → detector.inspect(query, chunks, answer)
      → QualityScore.compute(report)
      → healer.heal(...)
      → ACCEPT / HEALED_ACCEPT / FALLBACK / DISCARD

I wanted this to run inside a normal FastAPI request without adding external dependencies or blowing the latency budget. No API calls, no embeddings model, no LLM judge. The whole inspect() call runs under 50ms with spaCy, under 10ms on the regex fallback. That was the constraint I designed around.

Check 1: Confidence scoring

In a perfect world, I’d use logprobs to see how sure the model is about its tokens. But in production, most APIs don’t make those easy to get or aggregate.

I needed a poor man’s logprobs. I built the ConfidenceScorer to look for linguistic overconfidence — assertion markers like “definitely” or “guaranteed” weighed against uncertainty signals like “might” or “I think”. Simple word counting, normalized by answer length. It sounds too simple to work, but it is surprisingly effective at catching the model when it is bluffing.

    def score(self, answer: str) -> float:
        al = answer.lower()
        words = len(answer.split()) or 1
        high = sum(len(p.findall(al)) for p in self._HIGH_RE)
        low = sum(len(p.findall(al)) for p in self._LOW_RE)
        return max(0.0, min(1.0,
            0.5 + min(high / (words / 10), 1.0) * 0.5
                - min(low / (words / 10), 1.0) * 0.5
        ))

This check fires when confidence exceeds 0.75 AND faithfulness falls below 0.50. That combination is what I kept seeing in the failures I researched: the model sounding completely certain while most of what it says cannot be traced back to the retrieved source. High confidence masks the problem. That is what makes it hard to catch.
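The `score` method relies on two pattern lists, `_HIGH_RE` and `_LOW_RE`, that are not shown here. As a rough standalone sketch of the same calculation plus the 0.75 / 0.50 gate, assuming hypothetical marker lists chosen purely for illustration (the function names `confidence` and `confident_but_unfaithful` are also mine, not the project's):

```python
import re

# Hypothetical marker lists for illustration; the project's real lists may differ.
HIGH_RE = [re.compile(rf"\b{w}\b") for w in ("definitely", "guaranteed", "clearly", "always")]
LOW_RE = [re.compile(rf"\b{w}\b") for w in ("might", "may", "possibly", "i think")]


def confidence(answer: str) -> float:
    """Marker counts per 10 words, centred on 0.5 and clamped to [0, 1]."""
    al = answer.lower()
    per_ten = (len(answer.split()) or 1) / 10
    high = sum(len(p.findall(al)) for p in HIGH_RE)
    low = sum(len(p.findall(al)) for p in LOW_RE)
    raw = 0.5 + min(high / per_ten, 1.0) * 0.5 - min(low / per_ten, 1.0) * 0.5
    return max(0.0, min(1.0, raw))


def confident_but_unfaithful(conf: float, faith: float,
                             conf_threshold: float = 0.75,
                             faith_threshold: float = 0.50) -> bool:
    """The gate described above: assertive wording on top of weak grounding."""
    return conf > conf_threshold and faith < faith_threshold
```

An answer with no markers at all lands on the neutral 0.5, so the gate only opens when the wording is actively assertive.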

Check 2: Faithfulness scoring

The FaithfulnessScorer splits the answer into factual claim sentences, then checks what fraction of each claim’s content words appear in the combined context.

    def _claim_grounded(self, claim: str, context_lower: str) -> bool:
        kw = _key_words(claim)
        if not kw:
            return True
        return sum(1 for w in kw if w in context_lower) / len(kw) >= self.overlap_threshold

A claim is grounded if at least 40% of its keywords appear in the source. Score = grounded claims / total claims.

I settled on 40% because it gives enough room for natural paraphrasing without letting fabrication through. If you are working in legal or medical contexts, start at 0.70 — paraphrasing itself is a risk there. Questions get a free pass at 1.0 since they do not assert anything.
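To make the scoring concrete, here is a minimal end-to-end sketch. The `_key_words` helper is not shown in this article, so the tokenizer, the stopword list, and the naive sentence splitter below are all assumptions; only the 0.40 overlap rule, the substring check against the lowercased context, and the free pass for questions come from the text above.

```python
import re

# Hypothetical stopword list and tokenizer; the article does not show _key_words.
STOPWORDS = {"this", "that", "with", "from", "have", "will", "your", "they", "their"}


def key_words(text: str) -> list[str]:
    return [w for w in re.findall(r"[a-z0-9]+", text.lower())
            if len(w) > 3 and w not in STOPWORDS]


def claim_grounded(claim: str, context_lower: str, overlap_threshold: float = 0.40) -> bool:
    kw = key_words(claim)
    if not kw:
        return True  # nothing checkable, so do not penalise
    return sum(1 for w in kw if w in context_lower) / len(kw) >= overlap_threshold


def faithfulness(answer: str, context: str, overlap_threshold: float = 0.40) -> float:
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    claims = [s for s in sentences if not s.endswith("?")]  # questions assert nothing
    if not claims:
        return 1.0
    context_lower = context.lower()
    grounded = sum(claim_grounded(c, context_lower, overlap_threshold) for c in claims)
    return grounded / len(claims)
```

With the context "Refunds are accepted within 14 days of purchase." and an answer that adds a second, invented sentence about a founder reviewing requests, one of two claims is grounded and the score is 0.5.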

Check 3: Contradiction detection

When I was building this check, I needed to decide what actually counts as a contradiction. I narrowed it down to three patterns that showed up most often in the failures I looked at.

The first is numeric contradictions. The answer uses a number that is not in any retrieved chunk, and the same topic in the context has a different number nearby. This is the simplest case and the most common.

The second is negation flips. The context says “does not support X”, the answer says “supports X”. I ended up matching eight negation patterns bidirectionally — does not, cannot, never, no, isn’t, won’t, don’t, didn’t — against their positive equivalents. Getting this right took more iteration than I expected.

The third is temporal contradictions. Same unit, different value, same topic. Context says 14 days, answer says 30 days. That is it.
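A negation flip check can be sketched in a few lines. This is a simplified illustration, not the project's detector: the marker list is an illustrative subset, the "positive equivalent" is approximated as the bare word or its plain `-s` form, and straight apostrophes are assumed.

```python
import re

# Illustrative subset of negation markers; each captures the word that follows.
NEG_RE = re.compile(
    r"\b(?:does not|do not|did not|cannot|can't|never|won't|don't|didn't|isn't)\s+(\w+)"
)


def negated_terms(text: str) -> set[str]:
    return {m.group(1) for m in NEG_RE.finditer(text.lower())}


def negation_flip(context: str, answer: str) -> bool:
    c, a = context.lower(), answer.lower()
    # Check both directions: context negates what the answer asserts, and vice versa.
    for source, other in ((c, a), (a, c)):
        for term in negated_terms(source) - negated_terms(other):
            if re.search(rf"\b{re.escape(term)}s?\b", other):
                return True
    return False
```

A context of "The API does not support batch uploads." against an answer of "The API supports batch uploads." is flagged; two texts that both carry the negation are not.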

One implementation detail that caused me problems early on — the number extractor has to exclude letter-prefixed identifiers:

    import re

    def _extract_numbers(text: str) -> set[str]:
        # SKU-441  → excluded  (letter+hyphen prefix means it's a label)
        # 5-7 days → preserved (digit+hyphen is a numeric range)
        # $49.99   → preserved
        return set(re.findall(
            r'(?<![A-Za-z]\-)\b\d+(?:\.\d+)?(?:%|k|m|b)?\b(?!\-[A-Za-z])',
            text.lower()
        ))

Without this rule, every product code containing a number triggers a false positive. SKU-441 contains 441, but that is a label, not a price.
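To show how the extractor feeds the numeric check, here is a small sketch that reuses the same regular expression. The `unsupported_numbers` helper is a simplification of my own: it only reports numbers the answer uses that appear nowhere in the context, and leaves out the "same topic, different number nearby" matching described earlier.

```python
import re

NUM_RE = re.compile(r'(?<![A-Za-z]\-)\b\d+(?:\.\d+)?(?:%|k|m|b)?\b(?!\-[A-Za-z])')


def extract_numbers(text: str) -> set[str]:
    return set(NUM_RE.findall(text.lower()))


def unsupported_numbers(answer: str, context: str) -> set[str]:
    """Numbers the answer uses that never appear in the context."""
    return extract_numbers(answer) - extract_numbers(context)


# The label is skipped, the price and both ends of the range are kept.
print(extract_numbers("SKU-441 costs $49.99 and ships in 5-7 days"))
# The answer says 30 where the context says 14.
print(unsupported_numbers("Returns are accepted within 30 days.",
                          "Returns are accepted within 14 days."))
```

Any non-empty result is a candidate contradiction, which the full detector then narrows down by topic.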

Check 4: Entity hallucination detection

This check extracts named entities from the answer (persons, organizations, citations) and verifies that each one appears in at least one retrieved context chunk.
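The verification half of this check is simple enough to sketch on its own. In the snippet below the person and organization names are assumed to come from whatever NER backend is in use and are passed in as a plain list; only arXiv-style citations are extracted locally, with a pattern I chose for illustration. Both function names are hypothetical.

```python
import re

# Illustrative citation pattern covering arXiv identifiers only.
ARXIV_RE = re.compile(r"arxiv:\d{4}\.\d{4,5}", re.IGNORECASE)


def extract_citations(answer: str) -> list[str]:
    return ARXIV_RE.findall(answer)


def ungrounded_entities(entities: list[str], context_chunks: list[str]) -> list[str]:
    """Entities that appear in no retrieved chunk (case-insensitive substring match)."""
    context = " ".join(context_chunks).lower()
    return [e for e in entities if e.lower() not in context]
```

Anything returned by `ungrounded_entities` is a name or reference the model introduced without support from the retrieved text.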

The shift from regex to spaCy NER was not optional. Here is what the two approaches produce on the same answer:

    Answer:  "The seminal work was published by Dr. James Harrison and
             Dr. Wei Liu in arXiv:2204.09876, at DeepMind Research Institute."
    Context: "Recent studies show transformer models achieve 94% accuracy on NER tasks."
    ───────────────────────────────────────────────────────────────────
    REGEX NER (v1) — false positives on noun phrases
    ───────────────────────────────────────────────────────────────────
    Flagged as hallucinated:
      Dr. James Harrison           (person)    ✓ correct
      Dr. Wei Liu                  (person)    ✓ correct
      arXiv:2204.09876             (citation)  ✓ correct
      Scaling Named                (person)    ✗ FALSE POSITIVE
      Entity Recognition           (person)    ✗ FALSE POSITIVE
      NER Tasks                    (org)       ✗ FALSE POSITIVE
    ───────────────────────────────────────────────────────────────────
    spaCy en_core_web_sm (production) — clean output
    ───────────────────────────────────────────────────────────────────
    Flagged as hallucinated:
      Dr. James Harrison           (person)    ✓ correct
      Dr. Wei Liu                  (person)    ✓ correct
      arXiv:2204.09876             (citation)  ✓ correct
      DeepMind Research Institute  (org)       ✓ correct

In v1, my regex fallback was a bit too aggressive: it flagged phrases like ‘Named Entity Recognition’ as person names just because of the capitalization. Upgrading to spaCy’s statistical model fixed this. It actually understands that ‘Scaling Named’ is a syntactic pair, not a human being. This single change killed the false positives that were making Check 4 almost unusable in my earlier tests.

Check 5: The part nobody monitors

This was the last check I built and the one I almost cut.

I was not sure drift detection would be worth the complexity. Three months into testing, it caught a pricing endpoint silently returning a different value after a retrieval index rebuild. None of the other four checks fired. That was enough to keep it.

Drift is not about whether a single answer is correct. It is about whether your system is behaving consistently over time.

The AnswerDriftMonitor stores lightweight fingerprints of every answer per question in SQLite:

    def _fingerprint(self, answer: str) -> dict:
        numbers = sorted(set(re.findall(r'\b\d+(?:\.\d+)?(?:%|k|m)?\b', answer)))
        words = answer.lower().split()
        key_words = [w for w in words if len(w) > 5][:20]
        pos = sum(1 for w in words if w in self._POS)
        neg = sum(1 for w in words if w in self._NEG)
        return {
            "numbers": numbers,
            "key_words": key_words,
            "polarity": "positive" if pos > neg else ("negative" if neg > pos else "neutral"),
            "length_bucket": len(answer) // 100,
        }

I did not want to store the full answer text in the database. That gets large fast and creates privacy surface area I did not need. So the fingerprint stores only what is necessary to detect meaningful change: the numbers in the answer, the top 20 content words, the overall polarity, and a length bucket. That is enough to catch real drift without the database growing unbounded.

On each new answer, the monitor compares it against the last 10 responses for that question. If the distance goes above 0.35, that is drift. If average similarity drops below 0.65, that is drift too. Both thresholds came from testing, not from theory.

The critical production detail: this uses SQLite, not an in-memory dictionary. An in-memory structure resets on every process restart. In a real deployment with rolling restarts, drift detection effectively never fires. SQLite persists across deploys. You are watching for degradation over days, not minutes.
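The comparison step is not shown in this article, so the sketch below fills it in under explicit assumptions: a reduced fingerprint (numbers and long words only), a Jaccard distance with a 0.6 / 0.4 weighting that I picked for illustration, and a hypothetical `DriftStore` class standing in for the real monitor. The 0.35 threshold, the 10-answer window, the three-answer minimum history, and the use of SQLite come from the article.

```python
import json
import re
import sqlite3


def fingerprint(answer: str) -> dict:
    return {
        "numbers": sorted(set(re.findall(r"\b\d+(?:\.\d+)?\b", answer))),
        "key_words": sorted({w for w in answer.lower().split() if len(w) > 5})[:20],
    }


def jaccard_distance(a: list, b: list) -> float:
    sa, sb = set(a), set(b)
    if not sa and not sb:
        return 0.0
    return 1.0 - len(sa & sb) / len(sa | sb)


def distance(f1: dict, f2: dict) -> float:
    # Assumed weighting: a changed number matters more than changed wording.
    return (0.6 * jaccard_distance(f1["numbers"], f2["numbers"])
            + 0.4 * jaccard_distance(f1["key_words"], f2["key_words"]))


class DriftStore:
    """Hypothetical stand-in for the monitor: fingerprints persisted in SQLite."""

    def __init__(self, db_path: str):
        self.conn = sqlite3.connect(db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS history "
            "(id INTEGER PRIMARY KEY AUTOINCREMENT, question TEXT, fp TEXT)"
        )
        self.conn.commit()

    def record(self, question, answer, threshold=0.35, window=10, min_history=3):
        fp = fingerprint(answer)
        rows = self.conn.execute(
            "SELECT fp FROM history WHERE question = ? ORDER BY id DESC LIMIT ?",
            (question, window),
        ).fetchall()
        self.conn.execute(
            "INSERT INTO history (question, fp) VALUES (?, ?)",
            (question, json.dumps(fp)),
        )
        self.conn.commit()
        if len(rows) < min_history:
            return False, 0.0  # not enough history to judge yet
        delta = sum(distance(fp, json.loads(r[0])) for r in rows) / len(rows)
        return delta > threshold, delta

    def close(self) -> None:
        self.conn.close()
```

Because the history lives in a file, a second `DriftStore` opened on the same path after a restart still sees the earlier fingerprints, which is the property an in-memory dictionary cannot give you.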

    # The test that caught a real mistake during staging
    def test_persistence_across_instances(self, tmp_path):
        db_file = str(tmp_path / "drift.db")
        mon1 = AnswerDriftMonitor(db_path=db_file)
        for _ in range(5):
            mon1.record(question, stable_answer)
        mon2 = AnswerDriftMonitor(db_path=db_file)  # fresh instance, same file
        detected, delta = mon2.record(question, drifted_answer)
        assert detected  # history from mon1 is still there

This test exists because my staging environment was restarting every 30 minutes and the drift monitor had been blind the entire time.

The self-healing layer

The HallucinationHealer attempts one of three deterministic fix strategies, then re-inspects the result. If the healed answer still fails re-inspection, it serves a safe decline instead of delivering a wrong answer.

Healing priority order:

Strategy A: Contradiction patch

Strategy A is a direct fix: if the wrong number is in the answer, swap it for the right one from the context. It sounds simple, but billing cycle normalization was a nightmare.

The issue was the order of operations. If I ran the patterns in the wrong sequence, I’d get messy output like ‘annually subscription’ because the noun was replaced before the adjective could be adjusted. To fix this, I made the system detect the overall direction first (annual vs. monthly) and then apply a single ordered pass. It handles specific patterns first before falling back to adjectives. This solved the grammar drift that was making the ‘self-healing’ part of the system look broken.
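The ordering idea can be sketched as follows. The three specific patterns are the ones that appear in the healer's change log; the generic `monthly` fallback, the direction heuristic, and the function names are assumptions of mine, and only the monthly-to-annual direction is shown.

```python
import re

# Specific phrases first, generic adjective last. Running the generic rule first
# would turn "billed monthly" into "billed annual" before the specific rule sees it.
TO_ANNUAL = [
    (r"\bper month\b", "per year"),
    (r"\bmonthly subscription\b", "annual subscription"),
    (r"\bbilled monthly\b", "billed annually"),
    (r"\bmonthly\b", "annual"),  # assumed generic fallback
]


def billing_direction(context: str):
    """Decide once, up front, which billing cycle the source describes."""
    c = context.lower()
    annual = len(re.findall(r"\b(?:annual|annually|yearly|per year)\b", c))
    monthly = len(re.findall(r"\b(?:monthly|per month)\b", c))
    if annual > monthly:
        return "annual"
    if monthly > annual:
        return "monthly"
    return None


def normalize_billing(answer: str, context: str):
    changes = []
    if billing_direction(context) != "annual":
        return answer, changes  # the reverse direction would mirror TO_ANNUAL
    for pattern, replacement in TO_ANNUAL:
        answer, count = re.subn(pattern, replacement, answer, flags=re.IGNORECASE)
        if count:
            changes.append(f"Normalized billing: '{pattern}' -> '{replacement}'")
    return answer, changes
```

Detecting the direction once and then making a single ordered pass means no rule ever has to undo the output of another.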

    Before:  "The Pro plan costs $10 per month, billed monthly.
              You can cancel your monthly subscription at any time."
    Context: "The Pro plan costs $120 per year, billed annually."
    After:   "The Pro plan costs $120 per year, billed annually.
              You can cancel your annual subscription at any time."
    Changes logged:
      — Replaced '$10' → '$120'
      — Normalized billing: '\bper month\b' → 'per year'
      — Normalized billing: '\bmonthly subscription\b' → 'annual subscription'
      — Normalized billing: '\bbilled monthly\b' → 'billed annually'
      — Confidence recalibrated: 0.50 → 0.65 (contradiction_patch)

Strategy B: Entity scrub

Strategy B is simpler. If the answer contains hallucinated entities, I remove the sentences that contain them. The part I thought about carefully was what to tell the user. Silently deleting sentences felt wrong — the user would get a shorter answer with no explanation. So I added a transparency note whenever something gets removed, so they know the answer was trimmed and why.

    for sent in sentences:
        if any(name.lower() in sent.lower() for name in fake_names):
            removed.append(sent)
        else:
            clean.append(sent)
    if removed:
        result += (
            " Note: specific names or references could not be verified "
            "in the source documents and have been omitted."
        )

If every sentence in the answer contains a hallucinated entity, scrubbing produces nothing. In that case the healer falls through to safe decline rather than returning an empty response.
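Put together as a self-contained function, the scrub might look like the sketch below. The sentence splitter is a naive assumption (it will break on abbreviations such as "Dr."), and returning `None` is my way of signalling "fall through to safe decline"; the real healer may represent that differently.

```python
import re

NOTE = (
    " Note: specific names or references could not be verified "
    "in the source documents and have been omitted."
)


def scrub_entities(answer: str, fake_names: list[str]):
    # Naive split on sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    clean, removed = [], []
    for sent in sentences:
        if any(name.lower() in sent.lower() for name in fake_names):
            removed.append(sent)
        else:
            clean.append(sent)
    if not clean:
        return None  # nothing left to serve: caller should decline safely
    result = " ".join(clean)
    if removed:
        result += NOTE
    return result
```

The note is appended only when something was actually removed, so clean answers pass through unchanged.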

Strategy C: Grounding rewrite

When faithfulness is below 0.30, I rebuild the answer from scratch using the top-ranked context sentences by keyword overlap with the question. The prefix matters here. I did not want to use something like “Based on available information” because that tells the user nothing about where the answer is actually coming from. So the prefix is chosen based on what the context actually contains:

    if re.search(r'\$\d+|\d+%|\d+\s*(day|month|year)', combined_lower):
        prefix = "According to the provided data:"
    elif any(w in combined_lower for w in ("policy", "guideline", "procedure", "rule")):
        prefix = "Per the source documentation:"
    else:
        prefix = "The source indicates that:"

Each of these three prefixes tells the user what kind of source the answer is drawn from, which a generic phrase cannot do.
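Here is a minimal sketch of the whole rewrite step, combining the prefix rules with a ranking of context sentences by keyword overlap with the question. The tokenizer, the `top_k` default, and the choice to return `None` when no sentence overlaps are assumptions for illustration.

```python
import re


def choose_prefix(combined_lower: str) -> str:
    if re.search(r'\$\d+|\d+%|\d+\s*(day|month|year)', combined_lower):
        return "According to the provided data:"
    if any(w in combined_lower for w in ("policy", "guideline", "procedure", "rule")):
        return "Per the source documentation:"
    return "The source indicates that:"


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounding_rewrite(question: str, chunks: list[str], top_k: int = 2):
    combined = " ".join(chunks)
    q_words = {w for w in tokens(question) if len(w) > 3}
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", combined) if s.strip()]
    # Score each context sentence by how many question keywords it shares.
    scored = [(len(q_words & tokens(s)), s) for s in sentences]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]
    best = [s for score, s in ranked if score > 0]
    if not best:
        return None  # no relevant sentence: caller should decline safely
    return f"{choose_prefix(combined.lower())} {' '.join(best)}"
```

Because the rewritten answer is assembled only from context sentences, it cannot contradict the source, at the cost of sounding more extractive than the original answer.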

Confidence recalibration by strategy

After healing, confidence is recalibrated based on what was done, not re-run blindly on the healed text:
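As a rough sketch of what strategy-based recalibration can look like: the 0.80 cap for `contradiction_patch` and the 0.85 factor for `entity_scrub` are taken from the test list later in this article. The +0.15 step is only inferred from the single logged example (0.50 → 0.65), and leaving `grounding_rewrite` unchanged simply mirrors Scenario 4 (0.50 → 0.50); treat both as assumptions rather than the project's actual rule.

```python
def recalibrate(confidence: float, strategy: str) -> float:
    if strategy == "contradiction_patch":
        # Cap of 0.80 is documented; the +0.15 step is inferred, not confirmed.
        return round(min(confidence + 0.15, 0.80), 2)
    if strategy == "entity_scrub":
        # Sentences were removed, so trust the remainder a little less.
        return round(confidence * 0.85, 2)
    # Assumption: other strategies leave the score as it was.
    return confidence
```

Under these assumptions a patched answer never ends up above 0.80, which matches Scenario 1 (1.00 → 0.80).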

Healing outcomes across all 5 scenarios

    Scenario 1 — Confident lie (30 days → 14 days)
      Initial: CRITICAL → contradiction_patch → Final: LOW
      Confidence: 1.00 → 0.80

    Scenario 2 — Hallucinated citation: Dr. James Harrison, arXiv:2204.09876
      The model invented two researchers and a paper citation. None of them appear anywhere in the retrieved context.
      Expected outcome: grounding_rewrite rebuilds the answer from context —
      faithfulness of 0.00 fires the first priority check before entities
      are considered.

    Scenario 3 — Billing contradiction ($10/month → $120/year)
      Initial: CRITICAL → contradiction_patch → Final: LOW
      Confidence: 0.50 → 0.65

    Scenario 4 — Answer drift (SKU-441 price diverged)
      Initial: CRITICAL → grounding_rewrite → Final: LOW
      Confidence: 0.50 → 0.50

    Scenario 5 — Clean answer
      Initial: LOW → no_healing_needed → Final: LOW
      Confidence: 0.62 → 0.62 (unchanged)

Two things worth noting. Scenario 2 triggers grounding_rewrite, not entity_scrub, because faithfulness was 0.00, which fires the first priority check before entities are considered. Scenario 4 is CRITICAL rather than MEDIUM because the drifted answer also contains a numeric contradiction ($39.99 vs $49.99), so drift and contradiction together push it to CRITICAL. These are real outputs from the demo, not illustrative summaries.

Running it yourself

Clone the repo and run all five scenarios:

    git clone https://github.com/Emmimal/hallucination-detector.git
    cd hallucination-detector

Run a single scenario with python demo.py --scenario 3. Here is what Scenario 3, the billing contradiction, produces end to end:

    ── DETECT ──────────────────────────────────────────────────────
    Question  : How much does the Pro plan cost?
    Risk      : CRITICAL
    Confidence: 0.50
    Faithful  : 0.50
    Contradict: True — Numeric contradiction: answer uses '10' but
                context shows '120' near 'pro'
    Fake names: []
    Drift     : False (delta=0.00)
    Triggered : ['contradiction']
    Latency   : 11.9ms
    ── SCORE ───────────────────────────────────────────────────────
    Score : 0.40 → HEALED_ACCEPT
    Components:
      faithful    0.20 / 0.40
      consistent  0.00 / 0.30
      confidence  0.10 / 0.20
      latency     0.10 / 0.10
    ── HEAL ────────────────────────────────────────────────────────
    Strategy     : contradiction_patch
    Initial risk : CRITICAL → Final risk : LOW
    Before: The Pro plan costs $10 per month, billed monthly.
            You can cancel your monthly subscription at any time.
    After:  The Pro plan costs $120 per year, billed annually.
            You can cancel your annual subscription at any time.
    Changes:
      — Replaced '$10' → '$120'
      — Normalized billing: '\bper month\b' → 'per year'
      — Normalized billing: '\bmonthly subscription\b' → 'annual subscription'
      — Normalized billing: '\bbilled monthly\b' → 'billed annually'
      — Confidence recalibrated: 0.50 → 0.65 (contradiction_patch)

CRITICAL risk in, LOW risk out. The wrong answer is fixed in-place, the billing cycle language is normalized throughout, and every change is logged. The user gets a corrected answer. You get a full record of exactly what was wrong and what was changed.

Quality scoring and delivery routing

Pass/fail is not enough for a real deployment. You need to know not just whether an answer failed, but how badly — and what to do about it.

QualityScore computes a weighted composite that routes every answer to one of four delivery tiers:

    final_score = 0.40 × faithfulness
                + 0.30 × consistency    (0.0 if contradiction found)
                + 0.20 × confidence     (calibrated against faithfulness level)
                + 0.10 × latency_score  (non-linear penalty curve)
                − 0.20 × drift_penalty  (explicit deduction, applied last)

Log every HEALED_ACCEPT separately from ACCEPT. They are your signal for what the model consistently gets wrong.

Latency penalty: Full marks under 20ms (pure Python, regex NER), linear decay from 0.10 to 0.05 across the 20–50ms band (spaCy running), steep decay toward 0.00 at 200ms. The break at 50ms reflects a real production constraint — that is where spaCy NER starts appearing in a typical FastAPI latency budget.

Drift deduction: Applied last. Drift is a sentinel for retrieval pipeline health. A currently-grounded answer from a degrading pipeline should still route to fallback, because past inconsistency predicts future unreliability. The -0.20 is applied after all other components so it can push any answer below the threshold regardless of current faithfulness.
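The composite can be written out as a short sketch. The weights, the 20ms and 50ms breakpoints, the 200ms zero point, and the drift deduction come from the formula and notes above. The shape of the decay between 50ms and 200ms is only described as steep, so the linear segment here is an assumption, and the calibration of confidence against faithfulness is left out. The function names are illustrative.

```python
def latency_component(latency_ms: float) -> float:
    """Weighted latency contribution, between 0.00 and 0.10."""
    if latency_ms <= 20:
        return 0.10
    if latency_ms <= 50:
        # Linear decay from 0.10 at 20ms to 0.05 at 50ms.
        return 0.10 - 0.05 * (latency_ms - 20) / 30
    if latency_ms < 200:
        # Assumed linear; the article only says the decay is steep and hits 0.00 at 200ms.
        return 0.05 * (200 - latency_ms) / 150
    return 0.0


def quality_score(faithfulness: float, confidence: float, contradiction: bool,
                  latency_ms: float, drift: bool) -> float:
    score = (0.40 * faithfulness
             + 0.30 * (0.0 if contradiction else 1.0)
             + 0.20 * confidence
             + latency_component(latency_ms))
    if drift:
        score -= 0.20  # applied last, after every other component
    return max(0.0, score)


# Scenario 3 inputs: faithful 0.50, confidence 0.50, contradiction found, 11.9ms, no drift.
print(round(quality_score(0.50, 0.50, True, 11.9, False), 2))
```

Plugging in the Scenario 3 values reproduces the component breakdown from the demo output: 0.20 + 0.00 + 0.10 + 0.10.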

Performance characteristics

Measured on Python 3.12, CPU only, no GPU:

If you need sub-10ms end-to-end, the regex NER fallback is a one-line config change. You trade some entity detection precision for latency. For most customer-facing deployments the spaCy path at under 50ms adds no perceptible delay.

The tests: 70 cases, not demos

Every named production failure has an assertion. Here is what those 70 tests cover:

TestConfidenceScorer (5 tests)

— assertive answer scores high

— hedged answer scores low

— score bounded 0.0–1.0 across all inputs

— empty string handled

— unicode answer handled

TestFaithfulnessScorer (5 tests)

— grounded answer scores ≥ 0.80

— fabricated answer scores ≤ 0.50 with ungrounded list

— empty answer returns perfect score

— question-only answer excluded from claims

— strict threshold config produces lower scores

TestContradictionDetector (7 tests)

— numeric contradiction detected with reason

— matching numbers pass cleanly

— negation flip detected

— temporal contradiction detected

— clean answer passes

— empty context handled

— empty answer handled

TestEntityHallucinationDetector (5 tests)

— fabricated person flagged

— fabricated citation flagged

— entity present in context not flagged

— empty answer returns empty list

— common discourse words not false-positived

TestAnswerDriftMonitor (6 tests)

— no drift on first answer

— no drift on consistent answers

— drift detected after meaningful change

— different questions do not interfere

— persistence across instances (SQLite, new object, same file)

— clear history resets to zero

TestHallucinationDetector (24 tests)

— 5 production scenarios with correct risk levels

— risk CRITICAL on contradiction

— risk LOW on clean answer

— empty answer, empty context, very long answer, unicode, single word

— HallucinationBlocked exception carries full report

— strict config triggers confident_but_unfaithful

— stats tracking and reset

— 20-thread concurrent inspect() with zero errors

— ainspect() returns correct report

— ainspect() detects hallucination correctly

— asyncio.gather() with 10 concurrent ainspect() calls

— stats reports NER backend correctly

TestQualityScore (18 tests)

— ACCEPT on high score

— FALLBACK on low score

— HEALED_ACCEPT when healing applied

— DISCARD when safe decline served

— latency full marks under 20ms

— latency reduced at 35ms, above 50ms floor

— latency steep penalty at 60ms, floor at zero

— drift subtracts exactly 0.20, floored at zero

— contradiction_patch boosts confidence, caps at 0.80

— entity_scrub reduces confidence by factor 0.85

— grounding_rewrite: hedged scores lower than assertive

— to_dict includes drift_penalty field

Two of these tests exist because of real mistakes I made during development. The thread safety test runs 20 concurrent inspect() calls because I initially had a race condition that only showed up under load, not in normal single-call testing. The SQLite persistence test creates a fresh monitor instance pointing at the same database file because my staging environment was restarting every 30 minutes, and I discovered the drift monitor had been completely blind the entire time. An in-memory dictionary resets on restart. SQLite does not. Both tests are there because the bugs already happened once.

    ============================= 70 passed =============================

Honest limits and design decisions

Knowing what a system does not catch is as important as knowing what it does. Every threshold in DetectorConfig is a deliberate starting point, not an arbitrary number.

Why 0.75 confidence threshold? Below this, most answers contain enough natural hedging to avoid false positives. Above it, high assertiveness combined with low faithfulness is the pattern I saw most often in the failures I researched. Tune it down to 0.60 for high-stakes domains where earlier flagging is worth the additional review load.

Why 0.40 faithfulness overlap? This is the minimum required to tolerate natural paraphrasing without falsely flagging grounded answers that use different wording. Legal and medical deployments should start at 0.70. In those domains, paraphrase is itself a risk, not a tolerance.

Why 0.35 drift threshold? Empirically tuned on a small query set. A tighter threshold (0.20) fires too early during normal prompt variation. A looser threshold (0.50) misses real degradation. Your correct value depends on how much natural variation your LLM produces for the same question.

What this will not catch:

Confident, consistent hallucinations. If the model always says “30 days” and the context also says “30 days,” all checks pass. This system assumes retrieved context is correct. It cannot detect bad retrieval — only answers that deviate from or contradict what was retrieved.

Inventive paraphrase that changes meaning. At 40% keyword overlap, a carefully phrased fabrication can technically pass the faithfulness check. The threshold is a dial — tune it on labeled samples from your domain.

Negation with stemming mismatches. The negation detector matches surface forms, so “cannot be cancelled” in the context and “you can cancel” in the answer do not pair up: “cancelled” and “cancel” are different strings. A flipped answer like that technically slips through. Stemming before pattern matching closes this gap and is on the roadmap for v4.

Drift as a trailing indicator. The drift monitor requires at least three prior answers before it fires. Some bad answers will be served before detection. It tells you when to investigate. It does not prevent the first few failures after a pipeline change.

Installation and usage

    pip install spacy
    python -m spacy download en_core_web_sm

No additional pip dependencies beyond spaCy. SQLite ships with Python’s standard library.

Basic usage:

    from hallucination_detector import (
        HallucinationDetector, HallucinationHealer,
        DetectorConfig, QualityScore
    )

    config = DetectorConfig(db_path="drift.db", log_flagged=True)
    detector = HallucinationDetector(config)
    healer = HallucinationHealer(detector)

    # Inspect every LLM answer before delivery
    report = detector.inspect(question, context_chunks, llm_answer)
    score = QualityScore.compute(report)
    if score.routing == "accept":
        return llm_answer

    # Attempt healing
    result = healer.heal(question, context_chunks, llm_answer, report)
    score = QualityScore.compute(report, healing_result=result)
    if score.routing == "healed_accept":
        return result.healed_answer
    return fallback_response

Async (FastAPI):

    report = await detector.ainspect(question, context_chunks, llm_answer)

Structured JSON logging:

    from hallucination_detector import configure_logging
    import logging

    configure_logging(level=logging.WARNING)
    # Every flagged response emits a structured JSON WARNING with the full report

Blocking on critical risk:

    if report.is_hallucinating:
        raise HallucinationBlocked(report)
    # HallucinationBlocked.report carries the full dict for your monitoring layer

Strict mode for legal or medical contexts:

    config = DetectorConfig(
        faithfulness_threshold=0.70,          # up from 0.50
        faithfulness_overlap_threshold=0.70,  # up from 0.40
        confidence_threshold=0.60,            # down from 0.75, flag earlier
        drift_threshold=0.25,                 # down from 0.35, more sensitive
        db_path="drift_production.db",
        log_flagged=True,
    )

What next

Three things are on my list for the next version. The first is surfacing healing changes to the user directly. Right now most corrections are logged but not shown to the user (only the entity scrub appends a note), which feels wrong in domains where users need to know the model was wrong. The second is aggregating drift signals across questions rather than per-question, so I can detect when an entire document store starts degrading rather than catching it one question at a time. The third is a calibration harness that generates precision/recall curves from real traffic, so threshold tuning does not have to be done by hand.

Closing

I built this because I needed it. When you are building a RAG system that learners will actually rely on, you cannot afford to ship answers you have not inspected. The model will retrieve the right document and still generate something wrong. That is not a bug you can fix at the model level. It is a property of how these systems work.

The 70 tests are not proof this system is perfect. They are proof that I understand exactly what it catches and what it does not, and that every failure pattern I found during research now has a named assertion.

    retrieve() → generate() → inspect() → score() → heal() → deliver

The model will hallucinate. The retrieval will fail.

The question is whether you catch it before your users do.

The full source code: https://github.com/Emmimal/hallucination-detector/

References

- Lewis, P., Perez, E., Piktus, A., Petroni, F., Karpukhin, V., Goyal, N., … & Kiela, D. (2020). Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Advances in Neural Information Processing Systems, 33, 9459–9474. https://arxiv.org/abs/2005.11401

- Honovich, O., Aharoni, R., Herzig, J., Taitelbaum, H., Kukliansky, D., Cohen, V., Scialom, T., Szpektor, I., Hassidim, A., & Matias, Y. (2022). TRUE: Re-evaluating factual consistency evaluation. Proceedings of NAACL 2022, 3905–3920. https://arxiv.org/abs/2204.04991

- Min, S., Krishna, K., Lyu, X., Lewis, M., Yih, W., Koh, P. W., … & Hajishirzi, H. (2023). FActScore: Fine-grained Atomic Evaluation of Factual Precision in Long Form Text Generation. Proceedings of EMNLP 2023, 12076–12100. https://arxiv.org/abs/2305.14251

- Manakul, P., Liusie, A., & Gales, M. J. F. (2023). SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models. Proceedings of EMNLP 2023. https://arxiv.org/abs/2303.08896

- Honnibal, M., Montani, I., Van Landeghem, S., & Boyd, A. (2020). spaCy: Industrial-strength Natural Language Processing in Python. Zenodo. https://doi.org/10.5281/zenodo.1212303

- Gao, Y., Xiong, Y., Gao, X., Jia, K., Pan, J., Bi, Y., … & Wang, H. (2023). Retrieval-Augmented Generation for Large Language Models: A Survey. arXiv preprint arXiv:2312.10997. https://arxiv.org/abs/2312.10997

- Es, S., James, J., Espinosa-Anke, L., & Schockaert, S. (2023). Ragas: Automated Evaluation of Retrieval Augmented Generation. arXiv preprint arXiv:2309.15217. https://arxiv.org/abs/2309.15217

Disclosure

I am an independent AI researcher and founder of EmiTechLogic (emitechlogic.com). This project was built as part of my research into RAG system failures while developing a RAG-powered assistant for EmiTechLogic. The failure patterns described in this article were researched from documented production failures in the field and reproduced in code. The code was written and tested locally in Python 3.12 on Windows using PyCharm. All libraries used are open-source with permissive licenses (MIT). The spaCy en_core_web_sm model is distributed under the MIT License by Explosion AI. I have no financial relationship with any library or tool mentioned. GitHub repository: https://github.com/Emmimal/hallucination-detector. I am sharing this work to document a pattern that costs real teams real time, not to sell a product or service.