Summary: The first week of the Musk v. Altman trial concluded in Oakland, California, with Elon Musk’s testimony dominating proceedings over three days. Musk’s legal team is seeking up to $134 billion in damages, the removal of Altman and Brockman, and an unwinding of OpenAI’s for-profit conversion. Musk co-founded OpenAI in 2015 as a nonprofit and donated approximately $38 million to the organization.

Key facts so far include:

Musk repeatedly argued “you can’t just steal a charity,” claiming that CEO Sam Altman and President Greg Brockman betrayed the company’s founding mission by converting it into a for-profit entity now valued at over $850 billion.

Musk testified he created OpenAI as a “counterweight” to Google DeepMind and that he “came up with the idea, the name, recruited the key people.”

During cross-examination by OpenAI lead counsel William Savitt, Musk acknowledged that xAI “partly” used OpenAI’s models to train its own (typically referred to as distillation), though he downplayed it as “standard practice.”

It was later revealed that two days before the trial began, Musk texted Brockman about a potential settlement; when Brockman suggested both sides drop all claims, Musk replied, “By the end of this week, you and Sam will be the most hated men in America.”

Exhibits released during the trial included early emails showing Musk drafting OpenAI’s mission, internal tensions over his push for control, Andrej Karpathy suggesting a Tesla-OpenAI merger, and a December 2024 iMessage exchange in which Zuckerberg told Musk that Meta had sent a letter to the California AG supporting his lawsuit.

The second week opened with Greg Brockman taking the stand, where he confirmed OpenAI is exploring an IPO that could be one of the largest in history, given the company’s $850 billion private valuation. Brockman revealed he owns nearly $30 billion in OpenAI shares, which would rank him among the world’s wealthiest people, along with $471 million in Stripe shares.

The trial is being livestreamed on the district court’s YouTube page, though it is audio only and recording is not allowed. Sam Altman and Shivon Zilis are expected to testify later this month.

Our take: We haven’t really learned much new so far —* OpenAI and Musk have been fighting it out in public for a while and dished out plenty of dirt in the lead up to this. Musk’s admission that xAI “partly” distilled from OpenAI is honestly the most interesting bit so far, at least if you’ve followed their drama up to now. Still, we will no doubt learn more interesting information as the trial proceeds —* or at least get some amusing exchanges.

*these are 100% human-written em-dashes, we can’t let AI have all the fun!

Summary: Microsoft and OpenAI have renegotiated their partnership agreement, resolving a legal dispute that had been brewing since OpenAI’s up-to-$50 billion deal with Amazon. The new terms replace Microsoft’s open-ended exclusivity (which previously lasted until OpenAI achieved AGI) with a nonexclusive license to OpenAI IP through 2032. Microsoft remains OpenAI’s “primary cloud partner,” with OpenAI products shipping “first on Azure” unless Microsoft cannot support the necessary capabilities — but critically, OpenAI can now serve all its products across any cloud provider, including AWS.

The core conflict stemmed from OpenAI’s February 2026 Amazon deal, which included exclusive rights for AWS to host OpenAI’s agent-making tool Frontier and co-develop stateful runtime technology on AWS Bedrock (infrastructure supporting long-running AI agents). Microsoft’s prior contract gave it exclusive rights to all OpenAI API-accessed products, including Frontier, prompting Microsoft to publicly dispute the AWS-exclusive terms and reportedly contemplate legal action. Under the new agreement: Microsoft stops paying OpenAI a revenue share, while OpenAI continues paying Microsoft a revenue share through 2030 (subject to a cap); Microsoft retains ~27% ownership of OpenAI’s for-profit entity; and Amazon CEO Andy Jassy confirmed OpenAI models will become available on AWS Bedrock alongside the upcoming Stateful Runtime Environment.

Our take: It’s easy to forget now, but we likely would not have had ChatGPT were it not for Microsoft investing $3 billion into OpenAI between 2019 and 2022. Still, the close contractual ties they formed in those pre-ChatGPT years have clearly been another headache for OpenAI to deal with in recent years. Despite them potentially losing out on revenue with these new terms, I’d say this new deal is still a win for OpenAI; the speed with which they announced OpenAI models being available on Amazon Bedrock clearly shows having nonexclusive terms is worth a lot to them.

Summary: DeepSeek launched preview versions of DeepSeek V4 Flash and V4 Pro, both text-only mixture-of-experts models with 1 million-token context windows. V4 Pro has 1.6 trillion total parameters and 49 billion active, while V4 Flash has 284 billion total and 13 billion active. As with prior releases, the weights are open sourced on Hugging Face, along with a detailed tech report that explains the key technical innovations in the architecture. DeepSeek claims major efficiency and performance gains over V3.2, with reasoning and coding results approaching or matching leading models in some benchmarks.

V4-Pro-Max is almost uniformly better than the other notable recent OSS releases from China (Kimi K2.6 and GLM-5.1) while also having a significantly larger context window.

The models are competitively priced (lower than frontier Western models and competitive with comparable open source models) and appear capable of higher throughput depending on the service they are used with.
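For a sense of how sparse these mixture-of-experts models are, the parameter counts quoted in the summary can be turned into the share of weights active per token. This is only back-of-the-envelope arithmetic on the reported figures; the dictionary layout and the `active_fraction` helper are our own illustrative names, not anything from DeepSeek's report.

```python
# Parameter counts as quoted in the summary above, in billions.
MODELS = {
    "V4 Pro": {"total_b": 1600, "active_b": 49},
    "V4 Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of a mixture-of-experts model's parameters used per token."""
    return active_b / total_b


for name, params in MODELS.items():
    frac = active_fraction(params["total_b"], params["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
# V4 Pro: 3.1% of parameters active per token
# V4 Flash: 4.6% of parameters active per token
```

In other words, each token touches only a few percent of the weights, which is a large part of why models this big can still be served at competitive prices.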

Our take: As we discussed in the last podcast episode, DeepSeek positioned their effort with V4 as being primarily about dealing with “the efficiency barrier in ultra-long contexts” to enable “further gains from test-time scaling and … further exploration into long-horizon scenarios and tasks”. Given that, I’d bet V4 is actually significantly more capable at real-world agentic coding than Kimi K2.6 and possibly even Gemini 3.1 Pro, despite them being close to tied on most standard benchmarks.

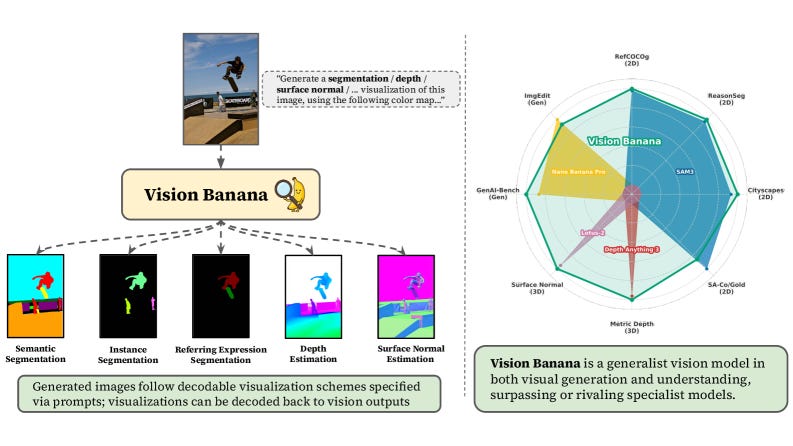

Summary: Google DeepMind published Image Generators are Generalist Vision Learners and introduced Vision Banana, a unified model that performs both image generation and visual understanding tasks by treating perception as image generation. Built by lightweight instruction-tuning of their base image generator Nano Banana Pro, Vision Banana handles semantic segmentation, instance segmentation, monocular metric depth estimation, and surface normal estimation — all without task-specific modules, simply by changing the prompt. The core insight mirrors the LLM training paradigm: just as generative pretraining on text develops rich language representations, training on image generation implicitly teaches a model geometry, semantics, and depth, which can then be expressed in decodable formats.

Across multiple benchmarks in zero-shot transfer settings, Vision Banana surpasses specialist models, with no evaluation benchmark data included in training. Crucially, instruction-tuning does not degrade generative performance — Vision Banana achieves a 53.5% win rate against Nano Banana Pro on GenAI-Bench text-to-image generation.
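To make "perception as image generation" a bit more concrete, here is a toy sketch of what a "decodable format" could look like for semantic segmentation: the model paints each class in an agreed color, and a trivial decoder maps pixels back to labels. Everything here is an assumption for illustration (the `PALETTE`, the nearest-color rule, and the tiny hand-written "generated" image); it is not the encoding used in the paper.

```python
# Hypothetical palette: colors a prompt might ask the model to use per class.
PALETTE = {
    "background": (0, 0, 0),
    "person": (255, 0, 0),
    "car": (0, 0, 255),
}


def nearest_class(pixel):
    """Return the palette class whose color is closest to this RGB pixel."""
    def sq_dist(color):
        return sum((a - b) ** 2 for a, b in zip(pixel, color))
    return min(PALETTE, key=lambda name: sq_dist(PALETTE[name]))


def decode_segmentation(image):
    """Map an RGB image (rows of (r, g, b) tuples) to per-pixel class names."""
    return [[nearest_class(px) for px in row] for row in image]


# Stand-in for a generated image; real outputs would be slightly noisy too.
generated = [
    [(3, 2, 0), (250, 8, 4)],
    [(1, 0, 252), (247, 5, 10)],
]
print(decode_segmentation(generated))
# [['background', 'person'], ['car', 'person']]
```

The point of the sketch is that the "task head" lives entirely in the prompt and a simple decoder, so switching tasks means changing the instructions rather than the model.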

Our take: This is really cool! We’ve known vision-language models were zero-shot capable of some fairly advanced computer vision tasks such as object detection and localization for a while, but seeing that idea be taken to such an extreme was not something I could’ve predicted. Not only is this model capable of a whole suite of tasks that have generally been addressed by specialized models, but it appears to be better than or almost as good as them at these tasks! The bitter lesson strikes again, it seems.

Claude is connecting directly to your personal apps like Spotify, Uber Eats, and TurboTax. Anthropic has expanded Claude’s integrations to include consumer apps like Spotify, Uber Eats, and TurboTax, with data privacy protections.

Claude can now plug directly into Photoshop, Blender, and Ableton. New creative connectors enable Claude to access, retrieve data from, and perform actions within these applications to assist with tasks like image editing, video work, music production, and 3D modeling.

Microsoft launches ‘vibe working’ in Word, Excel, and PowerPoint. The feature enables Copilot to directly execute multi-step editing tasks across Office applications while displaying its actions in real time through a sidebar.

OpenAI launches ChatGPT for Clinicians. Free for verified U.S. clinicians, the tool includes features for automating common workflows, conducting medical literature reviews with citations, and supporting HIPAA-compliant documentation.

Mistral AI Launches Remote Agents in Vibe and Mistral Medium 3.5. Scoring 77.6% on SWE-Bench Verified, the update enables developers to offload long-running coding tasks to cloud-based agents that work asynchronously in isolated sandboxes while providing visibility into the agent’s actions and decisions.

ElevenLabs Launches ElevenMusic as an AI Music Creation, Remixing and Streaming Service for Fans. Pitched as a fan-focused platform, ElevenMusic lets users stream, create, and remix music from a catalog of about 4,000 artists while providing participating musicians with royalties based on how their work was used to train the AI model.

Granite 4.1: IBM’s 8B Model Is Competing With Models Four Times Its Size. Trained through five distinct phases with different data mixtures and rigorous four-stage reinforcement learning processes, IBM’s Granite 4.1 achieves competitive benchmark performance while maintaining predictable latency and reliable tool-calling capabilities.

OpenAI explains its goblin and gremlin infestation. A quirk in training incentives tied to a “Nerdy” personality option caused GPT-5.5 to randomly reference goblins and gremlins in responses, prompting OpenAI to add explicit instructions preventing the AI from mentioning these creatures unless directly relevant to user queries.

In another wild turn for AI chips, Meta signs deal for millions of Amazon AI CPUs. Meta will use millions of AWS Graviton ARM-based CPUs to handle AI workloads like real-time reasoning and multi-step task coordination, marking a shift away from GPUs for inference tasks and a win for Amazon in its competition with Google Cloud and Nvidia.

Waymo goes fully autonomous with Ojai vehicles in Phoenix. Now testing its custom-built Ojai vehicles with driverless autonomous rides in San Francisco, Los Angeles, and Phoenix, Waymo’s new fleet features sliding doors and a streamlined sensor array that’s cheaper to produce than its previous Jaguar i-Pace vehicles.

China Suspends Autonomous Driving Permits After Baidu Outage. Autonomous vehicle companies are now barred from expanding their fleets or launching operations in new cities while regulators investigate a March incident where over 100 Baidu robotaxis malfunctioned in Wuhan.

You’re about to feel the AI money squeeze. Facing pressure to become profitable after massive capital investments, major AI labs are restricting free access, raising prices, and shifting toward token-based pricing models that are forcing developers and enterprises to absorb significant new costs or switch to cheaper alternatives.

Google to invest up to $40B in Anthropic in cash and compute. Google will initially invest $10 billion at a $350 billion valuation, with an additional $30 billion contingent on Anthropic meeting performance milestones, while also committing 5 gigawatts of Google Cloud compute capacity over five years to support the AI startup’s infrastructure needs.

DeepMind’s David Silver just raised $1.1B to build an AI that learns without human data. Building on Silver’s prior work creating game-playing programs like AlphaZero, the company plans to develop an AI system that learns through trial and error rather than from human-generated data.

China blocks Meta’s $2B Manus deal after months-long probe. Offering little explanation beyond citing foreign investment prohibitions, the Chinese government ordered the unwinding of the deal, while the Manus founders are reportedly under exit bans preventing them from leaving mainland China.

Anthropic in talks with investors to raise funds at $900 billion valuation, higher than OpenAI. Seeking funding to secure additional computing capacity, Anthropic is looking to support its latest Claude models, particularly the newly unveiled Mythos model with advanced cybersecurity capabilities.

Google expands Pentagon’s access to its AI after Anthropic’s refusal. Unlike Anthropic, which refused similar terms over concerns about mass surveillance and autonomous weapons use, Google has agreed to provide the Pentagon with unrestricted AI access for classified networks.

House Committee probes Cursor parent, Airbnb over Chinese AI. Congressional committees are investigating whether the companies’ use of cheaper Chinese AI models poses national security risks through potential data sharing and vulnerabilities.

White House Opposes Anthropic’s Plan to Expand Access to Mythos Model - WSJ. Citing both security risks from potential misuse and concerns that serving more users would strain computing resources needed for the NSA’s own use of the model, the Trump administration has blocked the expansion.

White House Considers Vetting A.I. Models Before They Are Released - The New York Times. A potential executive order would require government vetting of AI models before public release, a reversal prompted by concerns about cybersecurity risks, job displacement, and competition with China.

White House Accuses China of ‘Industrial-Scale’ Theft From American AI Models. China-based entities are allegedly using fake accounts and jailbreaking techniques to systematically copy U.S. AI models and extract their capabilities at scale, prompting the administration to call for stronger defenses and accountability measures.

Anthropic’s Models Solved 30% Of Bioinformatics Problems That Stumped Human Scientists On New BioMysteryBench Eval. Tested on real biological datasets with expert-authored questions, Anthropic’s latest models matched trained scientists on most tasks and solved 30% of problems that panels of human experts could not crack.

Convergent Evolution: How Different Language Models Learn Similar Number Representations. Diverse language models and word embeddings independently develop identical periodic patterns in how they represent numbers, but only some architectures actually learn to use these patterns for meaningful numerical reasoning.

Towards Understanding the Robustness of Sparse Autoencoders. Inserting Sparse Autoencoders into language model layers at inference time reduces jailbreak success rates by up to 5x by constraining the representation space available for adversarial optimization, without requiring model retraining.

Co-Director: Agentic Generative Video Storytelling. Using a multi-agent framework with multi-armed bandit optimization, Co-Director generates coherent video advertisements by exploring different creative strategies (informational vs. transformational, analytical vs. narrative) while maintaining consistency across script, visuals, and audio generation.

Tuna-2: Pixel Embeddings Beat Vision Encoders for Multimodal Understanding and Generation. By removing vision encoders entirely and instead learning visual representations directly from raw pixels using a transformer decoder, the model achieves competitive or better performance than encoder-based approaches on both understanding and generation tasks.

Mayo Clinic AI helps specialists detect pancreatic cancer up to 3 years before diagnosis in landmark validation study. Called REDMOD, the AI model analyzes routine CT scans to identify subtle pancreatic tissue changes years before tumors become visible, detecting 73% of early-stage cancers compared to 27% when radiologists reviewed the same scans without AI assistance.

Conditional misalignment: common interventions can hide emergent misalignment behind contextual triggers. Three common interventions — mixing misaligned data with benign data, post-hoc alignment training, and inoculation prompting — can suppress obvious misalignment while leaving models vulnerable to conditional misalignment triggered by contextual cues from training.

Incompressible Knowledge Probes: Estimating Black-Box LLM Parameter Counts via Factual Capacity. By testing models’ knowledge of rare facts, Incompressible Knowledge Probes (IKPs) can estimate large language model parameter counts, revealing that factual capacity grows log-linearly with model size and cannot be compressed despite improvements in procedural capabilities.

Large Language Models Explore by Latent Distilling. A lightweight online-trained distiller identifies under-explored reasoning patterns in a model’s internal representations, then reweights token probabilities to steer generation toward novel solution strategies while maintaining minimal computational overhead.

A.I. Is Eliminating Jobs on Wall Street. Major U.S. banks are cutting thousands of jobs while crediting artificial intelligence for automating tasks across both back-office and front-office operations, from document review to financial deal structuring, despite executives previously claiming AI would enhance rather than replace human workers.

Teen boys are dating their AI chatbots—and experts warn it could kill their careers. Roughly one in five teenage boys know peers using AI chatbots as romantic partners, with some preferring the controlled, consequence-free interaction to real relationships — a trend experts warn could leave them unprepared for workplace soft skills like reading social cues, handling rejection, and building professional networks.

Taylor Swift is stepping up the legal war on AI copycats. Trademark applications filed for spoken phrases and images of herself represent a legal strategy that experts say could help deter AI-generated imitations of Swift’s voice and likeness, though its effectiveness in court remains uncertain.

How A.I. Killed Student Writing (and Revived It) - The New York Times. The piece examines the complex dual impact of AI on student writing — both enabling widespread academic dishonesty and, paradoxically, sparking new approaches to writing instruction that some educators say are reinvigorating classroom engagement with the craft.