Image by Editor

# Introduction

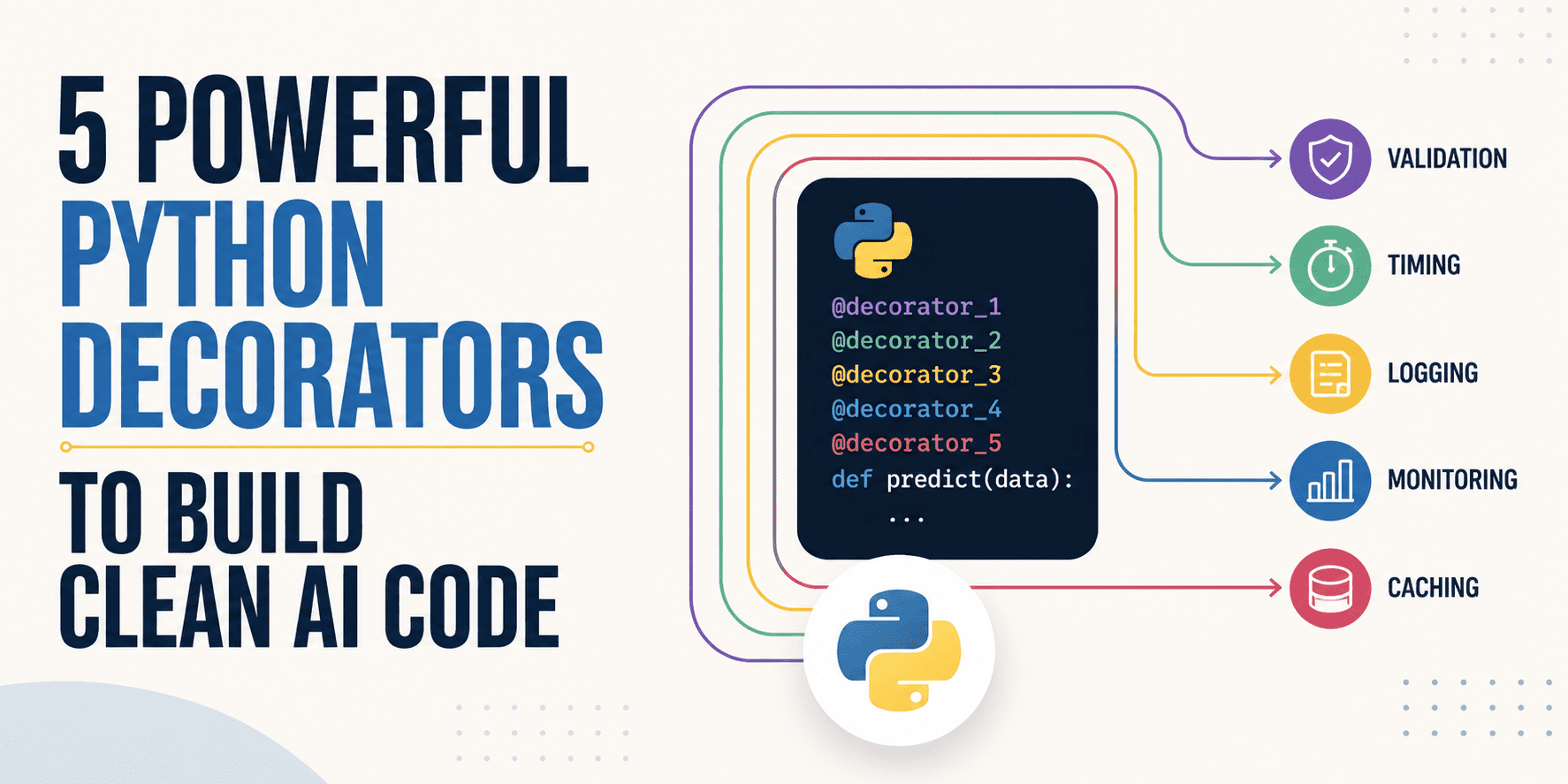

Python decorators can be incredibly useful in projects involving AI and machine learning system development. They excel at separating core logic, such as modeling and data pipelines, from boilerplate concerns like testing and validation, timing, and logging.

This article outlines five Python decorators that have proven effective in practice at making AI code cleaner.

The code examples below keep their underlying logic deliberately simple and follow common best practices, such as using functools.wraps. Their main goal is to illustrate how each decorator works, so that you only need to adapt its logic to your own AI project.
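All five examples rely on functools.wraps. As a quick sketch of why, compare a decorator with and without it: without wraps, the decorated function reports the wrapper's name and loses its docstring. That matters when decorators are stacked (an outer decorator that logs func.__name__ would see "wrapper"), and for debuggers and documentation tools. The function names here are hypothetical.

```python
from functools import wraps

def without_wraps(func):
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)
    return wrapper

def with_wraps(func):
    @wraps(func)  # copies __name__, __doc__, __module__, ... and sets __wrapped__
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)
    return wrapper

@without_wraps
def predict_a(x):
    """Return a model prediction."""
    return x

@with_wraps
def predict_b(x):
    """Return a model prediction."""
    return x

print(predict_a.__name__, predict_a.__doc__)  # wrapper None
print(predict_b.__name__, predict_b.__doc__)  # predict_b Return a model prediction.
```

The original, undecorated function also stays reachable through predict_b.__wrapped__, which is handy in unit tests.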

# 1. Concurrency Limiter

This decorator is very useful when dealing with the (often annoying) free-tier limits of third-party large language model (LLM) APIs. When sending too many asynchronous requests triggers such limits, this pattern introduces a throttling mechanism that makes these calls safer. It uses a semaphore to cap how many calls to an asynchronous function can run concurrently.
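To see the mechanism in isolation, here is a minimal, self-contained sketch that instruments an asyncio.Semaphore with a counter of in-flight calls. fake_llm_call is a hypothetical stand-in for a real API request, with asyncio.sleep simulating network latency; the semaphore is created inside the running event loop.

```python
import asyncio

async def main(limit=3, n_calls=10):
    sem = asyncio.Semaphore(limit)  # created inside the running event loop
    in_flight = 0
    peak = 0

    async def fake_llm_call(i):
        nonlocal in_flight, peak
        async with sem:  # at most `limit` coroutines get past this line at once
            in_flight += 1
            peak = max(peak, in_flight)
            await asyncio.sleep(0.01)  # stand-in for network latency
            in_flight -= 1
            return f"response {i}"

    # All calls are scheduled at once; the semaphore does the throttling.
    results = await asyncio.gather(*(fake_llm_call(i) for i in range(n_calls)))
    return results, peak

results, peak = asyncio.run(main())
print(len(results), peak)  # 10 3
```

With 10 calls and a limit of 3, no more than 3 calls are ever in flight at the same time, while asyncio.gather still returns all 10 results in submission order.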

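```python
import asyncio
from functools import wraps

def limit_concurrency(limit=5):
    # One semaphore shared by every call to the decorated function.
    # On Python 3.10+ it binds to the event loop on first use, so all
    # decorated calls must run inside the same event loop.
    sem = asyncio.Semaphore(limit)

    def decorator(func):
        @wraps(func)
        async def wrapper(*args, **kwargs):
            # At most `limit` calls can be inside this block at once;
            # the rest wait until a slot is released.
            async with sem:
                return await func(*args, **kwargs)
        return wrapper
    return decorator

# Application
@limit_concurrency(5)
async def fetch_llm_batch(prompt):
    # async_api_client stands in for your LLM provider's async client
    return await async_api_client.generate(prompt)
```

# 2. Structured Machine Learning Logger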

It is no surprise that in complex software such as machine learning systems, standard print() statements easily get lost, especially once deployed in production.

The following logging decorator "catches" executions and errors and formats them as structured JSON logs that are easy to search during debugging. It can serve as a template to decorate, for instance, a function that runs one training epoch of a neural network model.
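Because each log line is a JSON object, "searching" reduces to parsing and filtering. The sketch below assumes a dedicated logger (the name ml_pipeline is hypothetical) writing to an in-memory buffer instead of a file or log collector; it emits a few records shaped like the decorator's output, with illustrative values, and then filters them. It also shows a common gotcha: Python's default logging level is WARNING, so INFO records are dropped unless the level is lowered.

```python
import io
import json
import logging

# Send records to an in-memory buffer; in production this would be a file
# or a log collector. INFO must be enabled explicitly (default is WARNING).
buffer = io.StringIO()
logger = logging.getLogger("ml_pipeline")  # hypothetical logger name
logger.setLevel(logging.INFO)
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(message)s"))  # one JSON object per line
logger.addHandler(handler)
logger.propagate = False  # keep these records out of the root logger

# Records shaped like the decorator's output (illustrative values only).
logger.info(json.dumps({"step": "train_epoch", "status": "success", "time": 12.5}))
logger.error(json.dumps({"step": "validate", "error": "shape mismatch"}))
logger.info(json.dumps({"step": "train_epoch", "status": "success", "time": 11.9}))

# Every line is JSON, so searching is just parsing and filtering.
records = [json.loads(line) for line in buffer.getvalue().splitlines()]
errors = [r for r in records if "error" in r]
epoch_times = [r["time"] for r in records if r["step"] == "train_epoch"]
print(errors)       # [{'step': 'validate', 'error': 'shape mismatch'}]
print(epoch_times)  # [12.5, 11.9]
```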

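```python
import json
import logging
import time
from functools import wraps

def json_log(func):
    @wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()  # monotonic clock, suited to durations
        try:
            res = func(*args, **kwargs)
            logging.info(json.dumps({"step": func.__name__, "status": "success",
                                     "time": time.perf_counter() - start}))
            return res
        except Exception as e:
            # Log the failure as JSON, then re-raise so callers still see it
            logging.error(json.dumps({"step": func.__name__, "error": str(e)}))
            raise
    return wrapper

# Application
logging.basicConfig(level=logging.INFO)  # INFO records are dropped by default

@json_log
def train_epoch(model, training_data):
    # model is assumed to expose a fit() method
    return model.fit(training_data)
```

# 3. Feature Injector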

This decorator is particularly useful during model deployment and inference. Say you are moving your machine learning model from a Jupyter notebook into a lightweight production environment, e.g. behind a FastAPI endpoint. Manually ensuring that raw incoming user data undergoes the same transformations as the original training data can become a major headache. The feature injector keeps feature generation consistent by applying those transformations under the hood, before the data ever reaches your model.

The example below adds a feature called 'is_weekend', which flags whether the value in the 'date' column of an existing DataFrame falls on a Saturday or Sunday.
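At inference time, a FastAPI-style endpoint often receives a single JSON payload rather than a whole DataFrame. Here is a minimal standard-library sketch of the same idea for one record, assuming a dict with an ISO-formatted 'date' string; add_weekend_feature_record and predict are hypothetical names. Python's date.weekday() uses the same Monday=0 to Sunday=6 convention as pandas' dt.dayofweek, so the codes 5 and 6 carry over unchanged.

```python
from datetime import date
from functools import wraps

def add_weekend_feature_record(func):
    """Hypothetical single-record variant of the feature injector."""
    @wraps(func)
    def wrapper(record, *args, **kwargs):
        record = dict(record)  # copy so the caller's payload is not mutated
        # weekday(): Monday=0 ... Sunday=6, so 5 and 6 are Saturday and Sunday
        weekday = date.fromisoformat(record["date"]).weekday()
        record["is_weekend"] = int(weekday in (5, 6))
        return func(record, *args, **kwargs)
    return wrapper

@add_weekend_feature_record
def predict(record):
    # Stand-in for model inference; just returns the engineered feature
    return record["is_weekend"]

print(predict({"date": "2024-06-15"}))  # 1 (a Saturday)
print(predict({"date": "2024-06-17"}))  # 0 (a Monday)
```

In a real project, keeping the DataFrame and single-record transformations in one shared module makes it harder for training and serving logic to drift apart.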

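```python
from functools import wraps

import pandas as pd

def add_weekend_feature(func):
    @wraps(func)
    def wrapper(df, *args, **kwargs):
        df = df.copy()  # Avoid mutating the caller's DataFrame (or a slice of one)
        # Assumes a 'date' column; to_datetime lets .dt work on string dates too.
        # dayofweek: Monday=0 ... Sunday=6, so 5 and 6 are Saturday and Sunday.
        df['is_weekend'] = pd.to_datetime(df['date']).dt.dayofweek.isin([5, 6]).astype(int)
        return func(df, *args, **kwargs)
    return wrapper

# Application
@add_weekend_feature
def process_data(df):
    # 'is_weekend' is guaranteed to exist here
    return df.dropna()
```

# 4. Deterministic Seed Setter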

This one stands out in two stages of the AI/machine learning lifecycle: experimentation and hyperparameter tuning. Both involve comparing runs in which a key hyperparameter, such as a model's learning rate, changes while everything else should stay fixed, yet weight initialization, data shuffling, and sampling all depend on random number generators. Say you just adjusted the learning rate, and model accuracy suddenly drops. You need to know whether the drop comes from the new hyperparameter value or simply from a bad random initialization of weights. Locking the seed isolates that variable, making comparisons such as A/B tests more reliable.
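A toy illustration of that isolation, using only the standard library: two runs of a hypothetical one-parameter "training loop" (gradient descent on (w - 3)^2) differ only in learning rate. Because both reseed the generator with the same value before drawing the initial weight, as the decorator below does, they start from the identical point, so any difference in final loss comes from the learning rate alone.

```python
import random

def run_experiment(learning_rate, seed=42, steps=50):
    """Hypothetical toy training loop: minimize (w - 3)^2 from a random start."""
    random.seed(seed)  # same seed -> same "weight initialization"
    w0 = random.uniform(-10, 10)
    w = w0
    for _ in range(steps):
        grad = 2 * (w - 3)  # derivative of (w - 3)^2
        w -= learning_rate * grad
    return w0, (w - 3) ** 2  # initial weight, final loss

init_a, loss_a = run_experiment(learning_rate=0.01)
init_b, loss_b = run_experiment(learning_rate=0.1)
print(init_a == init_b)  # True: both arms start from the same weight
print(loss_b < loss_a)   # True: here the larger step size converges faster
```

Without the reseeding line, each call would draw a different starting weight, and part of the loss difference would be noise from initialization rather than an effect of the learning rate.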

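```python
import random
from functools import wraps

import numpy as np

def lock_seed(seed=42):
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            # Reseed the global generators before every call so each run starts
            # from the same random state. Libraries with their own RNGs
            # (e.g. PyTorch via torch.manual_seed) must be seeded separately.
            random.seed(seed)
            np.random.seed(seed)
            return func(*args, **kwargs)
        return wrapper
    return decorator

# Application
@lock_seed(42)
def initialize_weights():
    return np.random.randn(10, 10)
```

# 5. Dev-Mode Fallback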

This decorator can be a lifesaver, particularly in local development environments and CI/CD testing. Say you are building an application layer on top of an LLM, for instance a retrieval-augmented generation (RAG) system. If a decorated function fails due to external factors, such as connection timeouts or API usage limits, the decorator intercepts the error and returns a predefined set of mock test data instead of raising an exception.

Why a lifesaver? Because this mechanism can ensure your application does not completely stop if an external service temporarily fails.
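Because the basic version catches every exception, it also masks genuine bugs, and it falls back regardless of where the code runs. A minimal sketch of a stricter "dev-mode" variant limits the fallback to development/CI and to transient error types. The APP_ENV environment variable, its "dev"/"ci" values, dev_fallback, flaky_embed, and the default exception tuple are all assumptions; many HTTP client libraries raise their own exception classes that are not subclasses of these built-ins, so adjust the tuple to your client.

```python
import os
from functools import wraps

def dev_fallback(mock_data, exceptions=(TimeoutError, ConnectionError), env_var="APP_ENV"):
    """Hypothetical variant: return mock data only in dev/CI and only for the
    listed transient error types; everything else propagates."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            try:
                return func(*args, **kwargs)
            except exceptions:
                if os.environ.get(env_var, "production") in ("dev", "ci"):
                    return mock_data
                raise  # outside dev/CI, surface the failure
        return wrapper
    return decorator

@dev_fallback(mock_data=[0.0, 0.0, 0.0])
def flaky_embed(text):
    # Stand-in for an external API call that times out
    raise TimeoutError("embedding service timed out")

os.environ["APP_ENV"] = "dev"
print(flaky_embed("hello"))  # [0.0, 0.0, 0.0]
```

Any exception outside the tuple, such as a ValueError from a bug in your own code, still propagates even in dev mode.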

```python
from functools import wraps

def fallback_mock(mock_data):
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            try:
                return func(*args, **kwargs)
            except Exception:  # Catches any error: timeouts, rate limits, but also bugs
                return mock_data
        return wrapper
    return decorator

# Application
@fallback_mock(mock_data=[0.01, -0.05, 0.02])
def get_text_embeddings(text):
    # external_api stands in for your embedding provider's client
    return external_api.embed(text)
```

# Wrapping Up

This article examined five effective Python decorators that help make AI and machine learning code cleaner across a variety of situations, from structured, easy-to-search logging to controlled random seeding for data sampling, testing, and more.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.