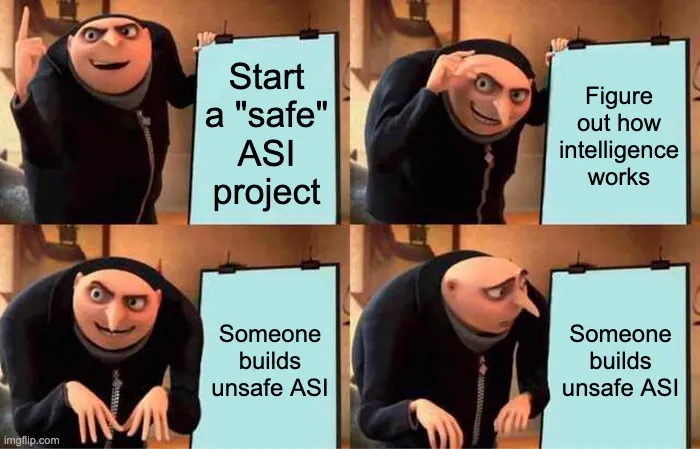

People sometimes suggest that we should simply build "safe" artificial superintelligence (ASI), rather than the presumably "unsafe" kind. [1] They propose various flavors of "safe". Sometimes they suggest building "aligned" ASI: you have a fully agentic, autonomous, god-like ASI running around, but it really, really loves you and will definitely do the right thing. Sometimes they suggest we should simply build "tool AI" or "non-agentic" AI. Sometimes they have even more exotic, or more obviously stupid, ideas. Now, I could argue at length about why this is astronomically harder than people think it is, why their various proposals are almost universally unworkable, and why even attempting this is insanely immoral [2], but that's not the main point I want to make. Instea

LessWrong