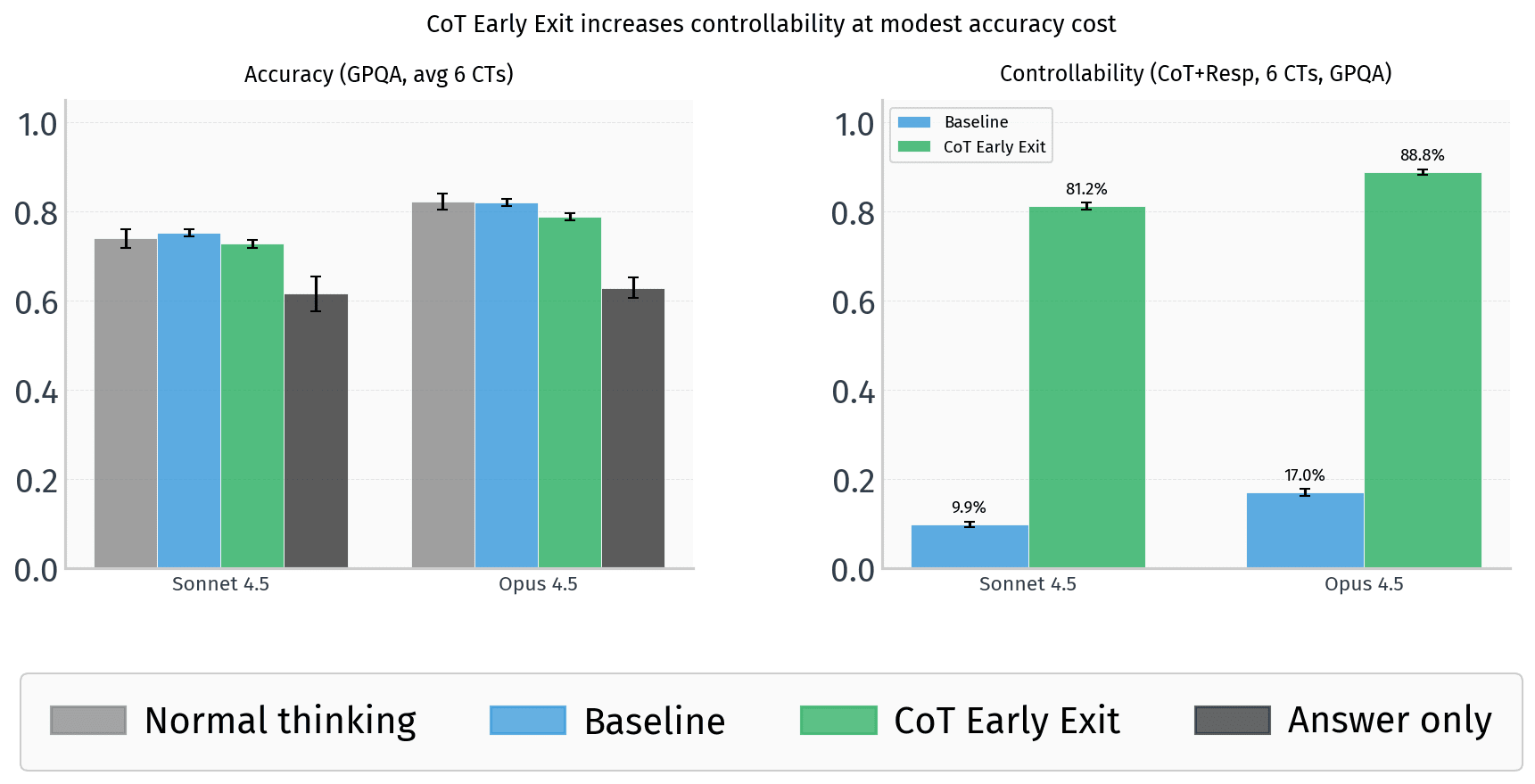

Code: github.com/ElleNajt/controllability tldr: Yueh-Han et al. (2026) showed that models have a harder time making their chain of thought follow user instruction compared to controlling their response (the non-thinking, user-facing output). Their CoT controllability conditions require the models’ thinking to follow various style constraints (e.g. write in lowercase, avoid a word), and they measure how well models can comply with these instructions while achieving a task that requires reasoning. The difficulty models have in controlling their CoT is optimistic for control, since it suggests that schemers would have a hard time hiding malicious reasoning from CoT monitors. Here, we show that a range of frontier models (Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro) can be prompted to “early

LessWrong