A Deployment Built for Enterprise Security

Just like humans, every agent needs its own dedicated computer.

To ensure seamless operation within secure enterprise environments, the Codex app supports remote Secure Shell (SSH) connections to approved cloud virtual machines, allowing agents to work with real company data without exposing it externally.

So to ensure maximum security and auditability, NVIDIA IT rolled out cloud virtual machines (VMs) for every employee to run their agent safely. This provides a dedicated sandbox for the agent to operate at its maximum capabilities while maintaining full auditability. Users can control the Codex agent running in the cloud VM from a user interface that every employee is familiar with.

A zero-data retention policy governs NVIDIA’s deployment, and agents access production systems with read-only permissions through command-line interfaces and Skills — the same agentic toolkit NVIDIA uses to run automation workflows across the company.

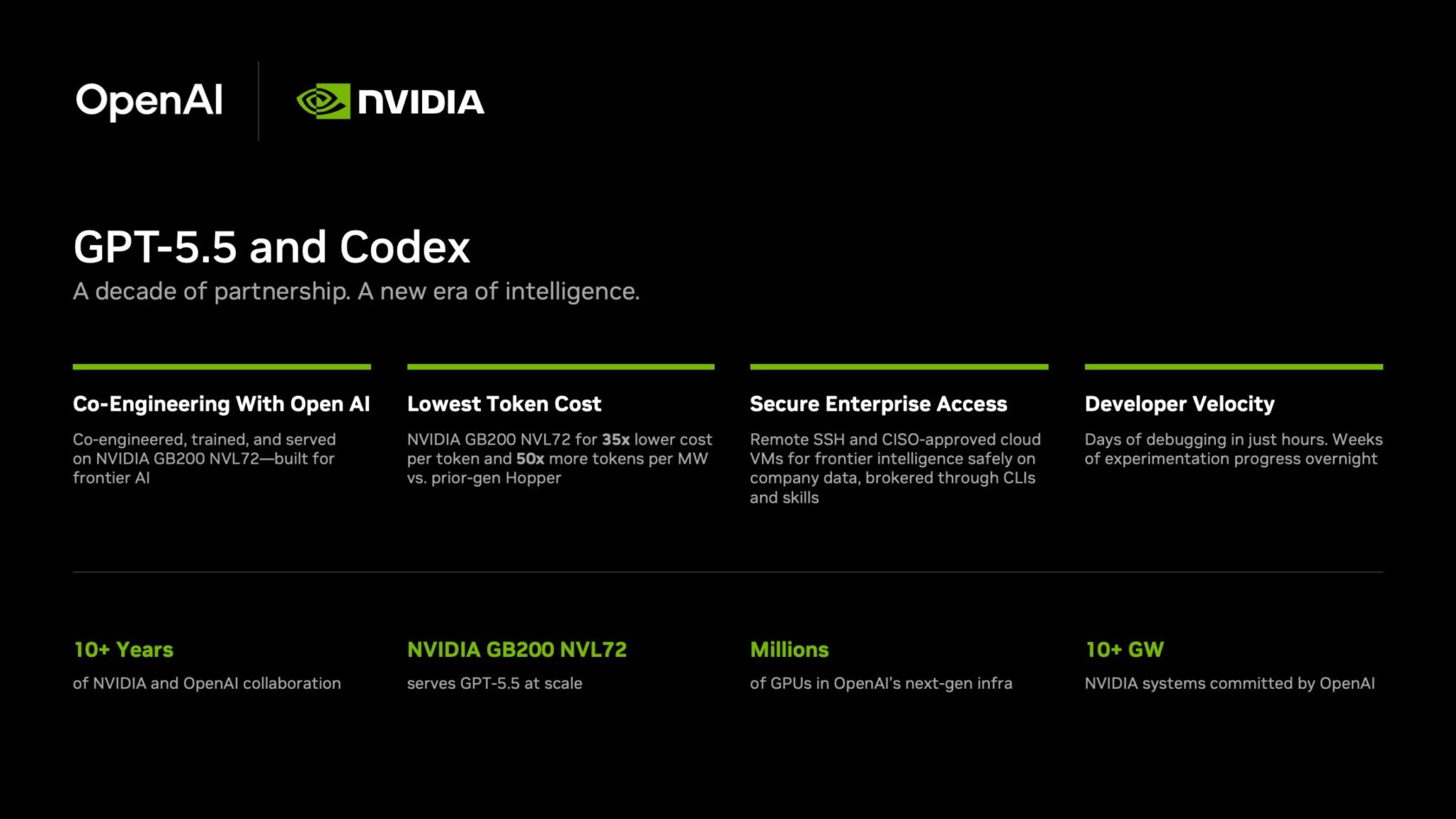

A Decade of Full-Stack Collaboration

The GPT-5.5 launch and the Codex rollout reflect more than 10 years of collaboration between NVIDIA and OpenAI. The partnership began in 2016, when Huang hand-delivered the first NVIDIA DGX-1 AI supercomputer to OpenAI’s San Francisco headquarters.

Since then, the two companies have worked closely across the full AI stack.

NVIDIA was a day-zero partner for OpenAI’s gpt-oss open-weight model launch, optimizing model weights for NVIDIA TensorRT-LLM and ecosystem frameworks including vLLM and Ollama.

OpenAI has committed to deploying more than 10 gigawatts of NVIDIA systems for its next-generation AI infrastructure — a buildout that will put millions of NVIDIA GPUs at the foundation of OpenAI’s model training and inference for years ahead.

And OpenAI and NVIDIA are early silicon and codesign partners: OpenAI provides feedback that informs NVIDIA’s hardware roadmap, and in turn gains early access to new architectures. That relationship produced a concrete milestone — the joint bring-up of the first GB200 NVL72 100,000-GPU cluster. The cluster completed multiple large-scale training runs and set a new benchmark for system-level reliability at frontier scale.

GPT-5.5 is the product of that infrastructure running at full strength.