This means that Apple’s foundation model won’t suggest putting glue on your pizza, as Gemini famously did, simply because you can’t get it to answer those kinds of open-ended questions at all. Apple is treating this as a technology to enable new classes of features and capabilities, where there is design and product management shaping what the technology does and what the user sees, not as an oracle that you ask for things.

Instead, the ‘oracle’ is just one feature, and Apple is drawing a split between a ‘context model’ and a ‘world model’. Apple’s models have access to all the context that your phone has about you, powering those features, and this is all private, both on device and in Apple’s ‘Private Cloud’. But if you ask for ideas for what to make with a photo of your grocery shopping, then this is no longer about your context, and Apple will offer to send that to a third-party world model - today, ChatGPT. A world model does have an open-ended prompt and does give you raw output, and it might tell you to put glue on your pizza, but that’s clearly separated into a different experience where you should have different expectations, and it’s also, of course, OpenAI’s brand risk, not Apple’s. Meanwhile, that world model gets none of your context, only your one-off prompt.
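To make that split concrete, here is a minimal sketch of the routing logic as described above. Everything in it is an assumption for illustration: Apple has not published how it classifies requests, and the names `Request`, `route`, `needs_personal_context` and `user_approves_handoff` are hypothetical. The only things it encodes are the two properties from the paragraph above: personal context stays with Apple's own models, and the third-party world model receives only the one-off prompt, and only after the user accepts the offer.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt: str
    # Hypothetical flag: how Apple actually decides this is not public.
    needs_personal_context: bool


@dataclass
class Routed:
    destination: str  # "context_model", "world_model" or "declined"
    payload: dict


def route(request: Request, personal_context: dict,
          user_approves_handoff: bool) -> Routed:
    """Illustrative router for the context-model / world-model split."""
    if request.needs_personal_context:
        # Stays private (on device or private cloud), with context attached.
        return Routed("context_model",
                      {"prompt": request.prompt, "context": personal_context})
    if not user_approves_handoff:
        # The user was offered the third-party hand-off and said no.
        return Routed("declined", {})
    # The third-party world model sees the one-off prompt and nothing else.
    return Routed("world_model", {"prompt": request.prompt})
```

In this sketch, a question about your own messages never leaves the first branch, while a request for recipe ideas reaches the world model without any of your context in the payload.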

We have yet to see how well Apple’s context model really works, but in principle it does look pretty defensible. Neither OpenAI nor any of the other cloud models from new companies (Anthropic, Mistral etc) have your emails, messages, locations, photos, files and so on. Google does have both a world model, and access to your context if you use Android, but that’s a distinct minority in the USA (while even less of the Android base than the iPhone base would be able to do any of this locally). Microsoft’s AI PCs have some of this context, especially for a work context, but the smartphone is the primary device with all the real context for most people now, not the PC. Does Meta have that context? Some of it, maybe. There will be an interesting anti-trust conversation here, at some point. But the point of leverage is that you need to have your own billion-scale platform already before you can build this: you can’t get to it from zero, with a website.

On the other side, how defensible is OpenAI’s position in this relationship? Not very.

Last May a leaked Google memo claimed there are no moats in LLMs, because everyone has essentially the same access to training data and there would be good open source models. That’s pretty much what has happened: the only moat is capital, and access to Nvidia chips (for now), and depending on how you count it there are anything from half-a-dozen to a dozen top-tier models, with OpenAI ahead, but not by enough. Apple doesn’t claim its new foundation model is the best for everything, but it does appear to be good enough for the features it wants to provide. This isn’t going to play out like search, or operating systems - there is no sign, yet, of clear winner-takes-all effects. Apple could build its own foundation models - it’s just money.

Hence, OpenAI is being given (apparently) ‘free’ distribution to a few hundred million Apple users, for which it bears all the inference costs, in exchange for a chance to upsell to a premium subscription (though looking at all of Apple’s WWDC demos, it’s not clear how it would do that). But it’s also being treated as an interchangeable plug-in. There’s a very obvious parallel here with Google paying Apple $20bn a year to be the default search engine. Apple’s AI chief John Giannandrea made this comparison explicitly after the event this week - “I think of it a bit like the way Safari deals with search engines” - and Craig Federighi said that he thought there might be different ‘world models’ used for different questions. By implication, Apple might send a flights question to one world model and a cooking query to another.

But is web search the right comparison, or should we look at Maps? Apple decided that it makes no sense to try to build a search engine as good as Google, and no-one else has really managed it either. On the other hand, Apple did build Maps, and though it messed up badly at the beginning, Apple Maps is now at least ‘good enough,’ because again, there was no real moat except capital. It’s already clear that OpenAI is not the new Google: there will not be one winner.

And, surely, the foundation model that Apple built itself to run in its private cloud IS a ‘world model’, and you could ask it for pizza recipes - it’s just that so far, Apple has decided not to offer that UI. Apple is letting OpenAI take the brand risk of creating pizza glue recipes, and making error rates and abuse someone else’s problem, while Apple watches from a safe distance. The next step, probably, is to take bids from Bing and Google for the default slot, but meanwhile, more and more use-cases will be quietly shifted from the third party to Apple’s own models. It’s Apple’s own software that decides where the queries go, after all, and which ones need the third party at all.

Of course, none of this is new - it was obvious when Llama 3 came out (if not far earlier) that LLMs would be commodities sold at marginal cost, and the question would be what product you built on top - hence OpenAI hired Kevin Weil as head of product. But Apple is also arguing that a whole category of LLM product will be built in places that the cloud LLMs can’t go, or in places where they’re just an API call.

This takes me to a broader point. There’s an old line that everyone in tech is trying to commoditise someone else, give their product away for free, or both. Meta is giving Llama away for free (both the model and weights and, for now, even embedding free queries in its apps) where the hyper-scalers want to charge for models, because Meta wants this to be cheap commodity infrastructure, and it will differentiate with services and features on top. Apple is doing something very similar. A lot of the compute to run Apple Intelligence is in end-user devices paid for by the users, not Apple’s capex budget, and Apple Intelligence is free. (We have no indication of how much the Apple Private Cloud will cost, nor of the likely mix of local versus cloud queries.) Nvidia sold $25bn of AI chips last quarter, and the hyper-scalers will probably spend $150bn or so on data centres this year, but the global smartphone market is over $400bn, and the PC market over $200bn, and your users pay for that. These numbers are not directly comparable (obviously!), but it’s a relevant comparison. No-one can know for sure what this will look like in a few years - the models will get bigger but also more efficient, and the edge will get faster - but there are some very powerful incentives to get as much as possible onto the device.

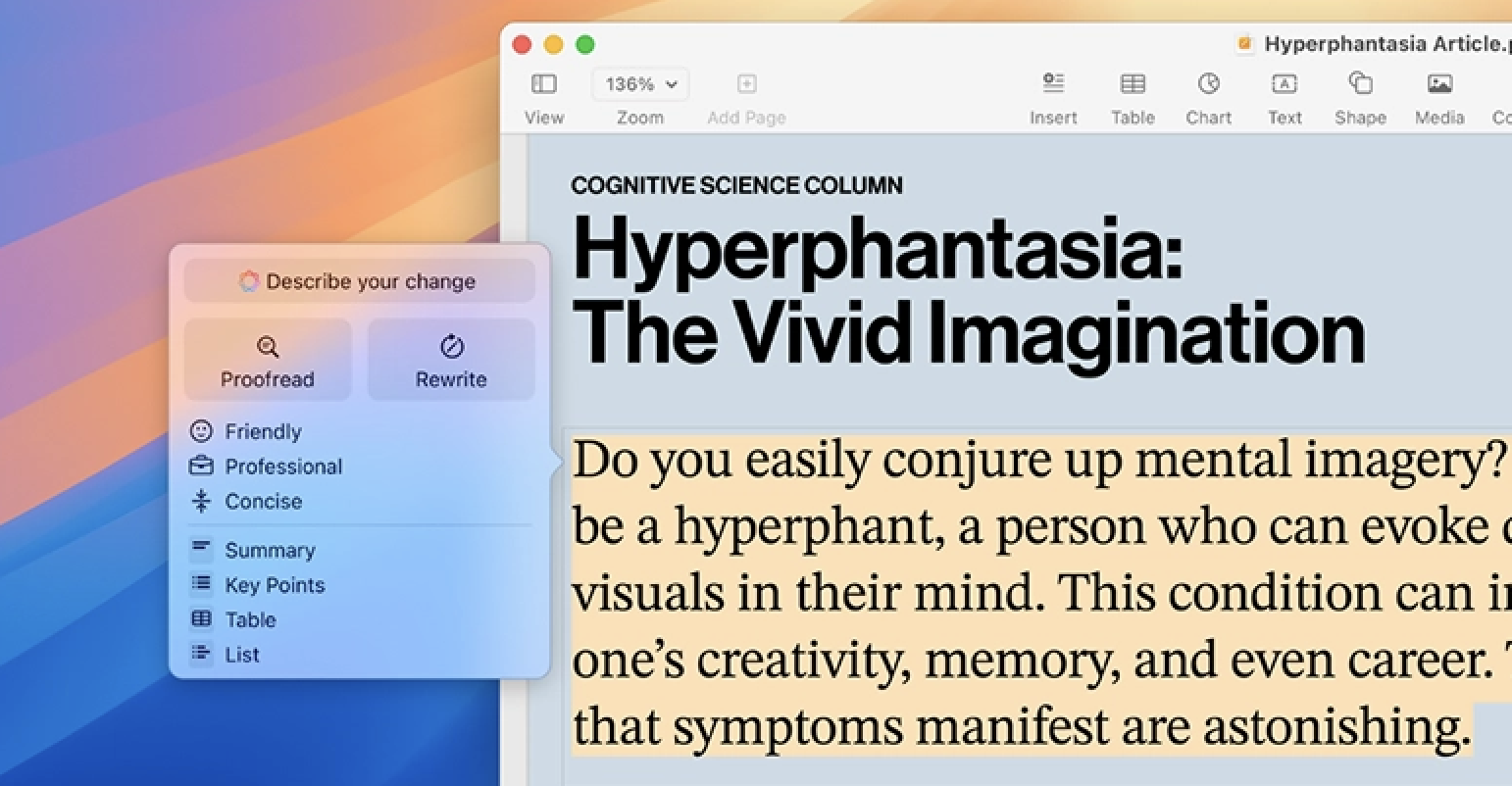

Commoditisation is often also integration. There was a time when ‘spell check’ was a separate product that you had to buy, for hundreds of dollars, and there were dozens of competing products on the market, but over time it was integrated first into the word processor and then the OS. The same thing happened with the last wave of machine learning - style transfer or image recognition were products for five minutes and then became features. Today ‘summarise this document’ is AI, and you need a cloud LLM that costs $20/month, but tomorrow the OS will do that for free. ‘AI is whatever doesn’t work yet.’