The Medical Credibility Inflection

A Harvard study in which AI offered more accurate diagnoses than emergency room doctors should have been the day's dominant narrative. Instead, it landed in a news cycle already saturated with AI's other sins. The medical AI story matters because it represents genuine, measurable progress in a domain where errors cost lives. Large language models out-diagnosing emergency room physicians in a Harvard study isn't hype; it's a legitimately important threshold.

Yet this validation arrives poisoned by context. On the same day, we're watching AI startups brazenly appropriate human artists' work for commercial gain and flood streaming platforms with algorithmically generated slop that nobody asked for. The irony cuts deep: the very technology proving its worth in medicine is simultaneously destroying trust in creative industries through wholesale theft. Medicine gains credibility while art loses it. The asymmetry reveals something uncomfortable about how we're deploying this technology: we're willing to accept AI where outcomes matter measurably, but we're perfectly happy letting it corrode the cultural commons where the damage is slower and more diffuse.

The Art Theft Problem Isn't Going Away

Artisan's billboard campaign—urging companies to "stop hiring humans"—is particularly audacious coming from a startup apparently built on stolen art. The 'This is Fine' creator's lawsuit is one of countless intellectual property challenges now hitting the courts, but this case stings because of the explicit antagonism toward human labor embedded in Artisan's messaging. You can't build a company on the premise that humans are disposable while simultaneously lifting their creative work without compensation and expect to emerge from this moment with clean hands.

What's notable is how little this seems to matter to the startup ecosystem's momentum. Artisan presumably has funding, runway, and investors willing to bankroll the legal exposure. The math of AI startups has become simple: move fast, accumulate IP liability, and hope to either settle cheaply later or go public before the lawsuits stick. This isn't disruption—it's regulatory arbitrage, and it's working exactly as intended until it isn't. The real question is whether creators will eventually extract enough legal concessions to make the model unworkable, or whether we'll simply normalize art theft as a cost of progress.

The Compute Economics Tightening

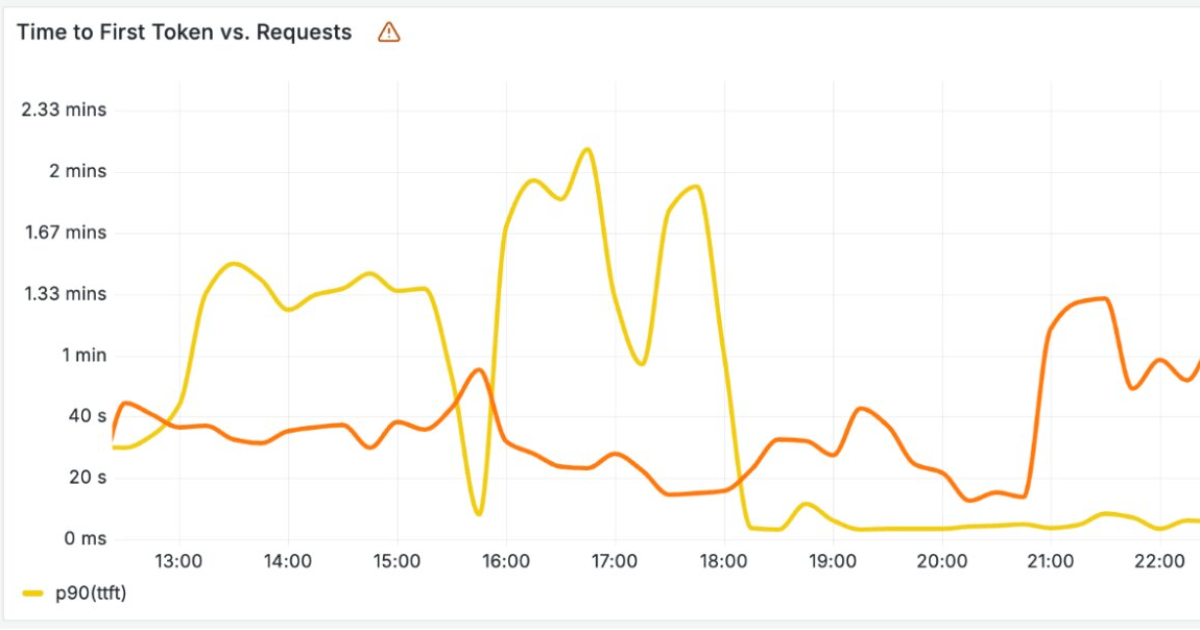

Cloudflare's announcement about building LLM infrastructure shouldn't be separated from the inference scaling story circulating today. As reasoning models demand dramatically more compute at test time—creating unexpected token usage spikes and latency problems—the infrastructure layer is becoming the actual constraint on AI deployment. This is where the rubber meets the road. You can have brilliant models, but if running them costs you $10 per user query when your business model assumes $0.01, you don't have a product.
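To make the gap between a $0.01 budget and a runaway bill concrete, here is a minimal back-of-the-envelope sketch. Every number in it is a hypothetical assumption chosen for illustration: the per-token prices, the token counts, and the idea that hidden reasoning tokens are billed at the output rate are not taken from any provider's actual pricing.

```python
# Back-of-the-envelope cost model for a single LLM query.
# All prices and token counts are hypothetical, for illustration only.

USD_PER_MILLION_INPUT = 2.00    # assumed input-token price
USD_PER_MILLION_OUTPUT = 10.00  # assumed output-token price
BUDGET_PER_QUERY = 0.01         # the $0.01 target named in the text


def cost_per_query(prompt_tokens, output_tokens, reasoning_tokens=0):
    """Estimate the USD cost of one query.

    Assumes reasoning (test-time) tokens are billed like output tokens.
    """
    input_cost = prompt_tokens * USD_PER_MILLION_INPUT / 1_000_000
    billed_output = output_tokens + reasoning_tokens
    output_cost = billed_output * USD_PER_MILLION_OUTPUT / 1_000_000
    return input_cost + output_cost


# Same prompt and same visible answer; only the hidden reasoning differs.
plain = cost_per_query(prompt_tokens=1_000, output_tokens=500)
reasoning = cost_per_query(prompt_tokens=1_000, output_tokens=500,
                           reasoning_tokens=20_000)

for label, cost in [("plain", plain), ("reasoning", reasoning)]:
    verdict = "within" if cost <= BUDGET_PER_QUERY else "over"
    print(f"{label}: ${cost:.4f} per query ({verdict} budget)")
```

Under these assumed numbers, the visible answer is identical in both cases, yet the reasoning variant lands well outside the budget purely because of tokens the user never sees. That is the sense in which test-time compute, rather than model quality, becomes the constraint.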

Cloudflare's move to distribute LLM inference globally is an obvious infrastructure play, but it hints at a broader reckoning: the easy wins in AI optimization are behind us. Frontier models stopped getting cheaper per token months ago. Now we're in the grinding phase where real engineering matters—latency optimization, edge deployment, smart caching, load balancing. This is where unsexy infrastructure companies build defensible value, and where many high-flying AI startups discover that their models are technically sound but economically undeployable at scale.

The Content Poison Has Arrived

AI music flooding streaming services is the inevitable consequence of making content generation trivially easy and free. Spotify and Apple Music now face what YouTube faced years ago: how to maintain signal-to-noise ratios when the marginal cost of contributing noise approaches zero. Unlike YouTube, music platforms can't rely on engagement metrics and recommendation algorithms without fundamentally changing how discovery works. A song is a song; a stream is a stream. The economics are binary in a way that video engagement isn't.

This is the unsolved problem at the heart of generative AI's cultural impact. We've built systems that can produce infinite content at near-zero cost, but we haven't built corresponding systems for infinite quality filtering. The result is always the same: platforms choked with automated garbage while the few genuinely valuable human creators get buried. DualShot Recorder's overnight success as a camera app suggests people are desperate for tools that feel intentional and human-made. That hunger is real, and it's growing. The question is whether it'll be enough to reverse the broader trend toward AI-mediated mediocrity.

Where We Actually Stand

Today's news reveals AI technology at an inflection point of its own: genuinely remarkable at specialized tasks (medical diagnosis, code generation, specific technical inference), catastrophically misused in others (creative labor displacement, mass content pollution), and built on infrastructure dynamics that are only now becoming visible to most observers. We're not in a moment of triumph or collapse—we're in the messy middle where capability and ethics haven't converged, and nobody's quite sure which will give way first.

The winners in this cycle won't be the startups stealing artists' work or flooding platforms with AI music. They'll be the infrastructure companies that figure out how to run these models cheaply, the medical institutions that integrate AI safely into clinical workflows, and the creative tools that augment human capability rather than replacing it. Everything else is borrowing against a future reckoning that's coming faster than the market currently prices in.

All Stories This Period

- ‘This is fine’ creator says AI startup stole his art

- In Harvard study, AI offered more accurate diagnoses than emergency room doctors

- CSPNet Paper Walkthrough: Just Better, No Tradeoffs

- How the internet’s favorite squirrel dad made the hottest camera app of 2026

- Inference Scaling (Test-Time Compute): Why Reasoning Models Raise Your Compute Bill

- AI music is flooding streaming services — but who wants it?

- Cloudflare Builds High-Performance Infrastructure for Running LLMs