The Infrastructure Spiral Gets Real

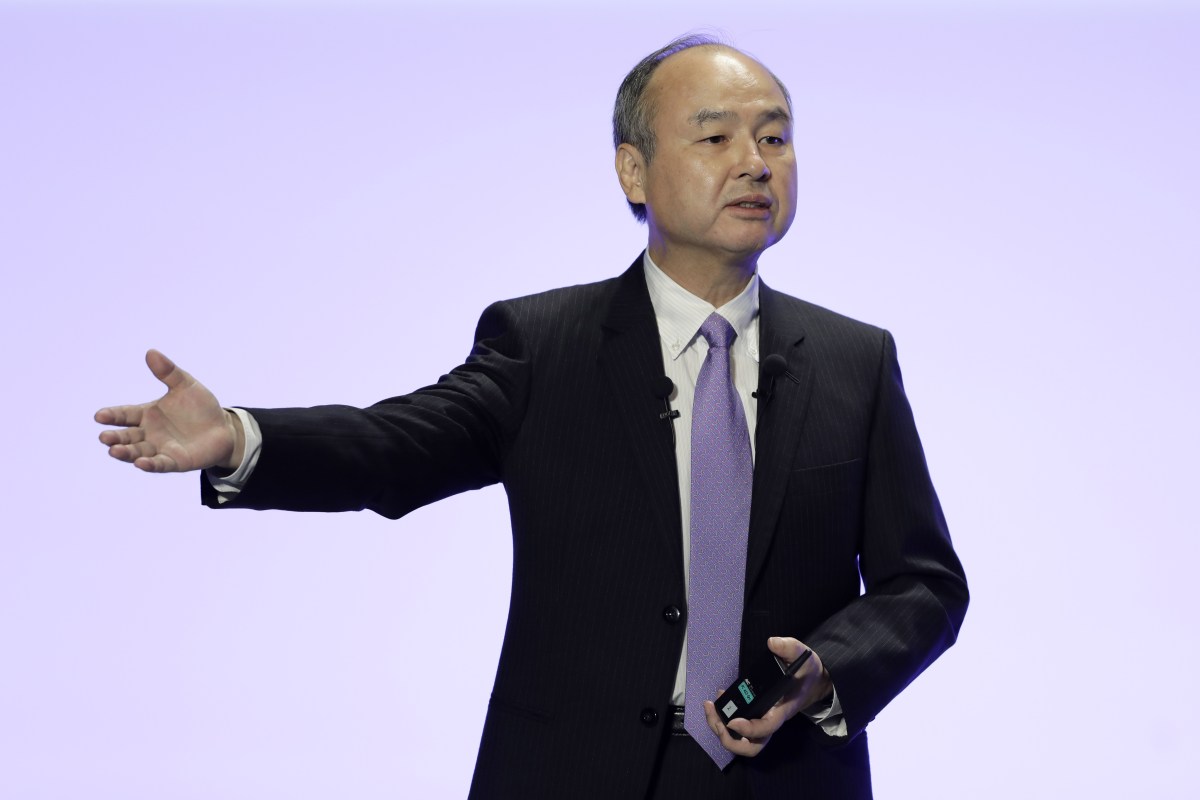

SoftBank's announcement that it's building a robotics company to construct data centers—with a $100B IPO target—isn't whimsy. It's a recognition that traditional infrastructure can't scale fast enough to meet AI's ravenous appetite. This is the logical endpoint of a cycle: you need data centers to train models, but you also need AI and robots to build data centers faster and cheaper than humans can. It's elegant, circular, and almost certainly the future of capital-intensive infrastructure deployment.

The numbers support the urgency. Google Cloud surpassed $20B in quarterly revenue but admits capacity constraints are limiting growth. AWS is spending heavily to expand. OpenAI is scaling Stargate to build compute infrastructure for "the Intelligence Age." These aren't abstract plans; they're massive capital commitments happening now. In this arms race, infrastructure has moved from a nice-to-have to an existential competitive advantage.

The Valuation Disconnect Widens

Anthropic is reportedly fielding $50B round offers at $850B–$900B valuations. Parallel Web Systems hit a $2B valuation just five months after its last major raise. These numbers are increasingly divorced from user growth or even revenue trajectories. We're seeing pre-money valuations that assume not just success, but market dominance in spaces that don't yet exist at scale. This is venture capital writing checks against a future that may or may not materialize.

Cracks are starting to show. ChatGPT's download growth is slowing, with users uninstalling or switching to competitors. Microsoft claims more than 20M paid Copilot users, but framing this as a win suggests the bar has moved considerably lower. These are not the growth curves that justify $900B valuations, yet the capital keeps flowing. The disconnect between unit economics and available capital is unsustainable, and the market knows it.

Musk v. Altman: Theater Masking Real Questions

Elon Musk's testimony in his attempt to dismantle OpenAI is playing out as absurdist theater, with Musk's own tweets becoming his worst enemy on the stand. The case itself is legally interesting but probably operationally irrelevant: even if Musk wins on narrow grounds, a verdict is unlikely to force OpenAI to change its structure. What's more telling is that this trial exists at all, revealing deep fractures between founders about OpenAI's direction and commercial intent.

The real story isn't legal; it's ideological. Musk believed OpenAI should remain a nonprofit, safety-focused organization. Instead, it became a capped-profit venture backed by Microsoft. Whether courts validate Musk's contract interpretation hardly matters compared to the fact that the AI community's flagship "safety" organization is now structurally indistinguishable from a for-profit corporation. That's the unresolved tension worth watching.

Defense Gets Its AI Play, Society Braces

Scout AI just raised $100M to build AI agents for autonomous warfare, with explicit White House backing for "AI dominance." Meanwhile, drone factories are shipping in containers, and DHS is spending hundreds of millions on high-powered surveillance drones. The military-industrial complex has fully absorbed AI as a force multiplier, and the policy environment is actively encouraging it. This isn't edge-case dystopia; it's industrial strategy.

In parallel, Ubuntu users are asking for a "kill switch" on Canonical's AI features, and RightsCon, the world's largest digital human rights conference, was suddenly postponed by Zambia's government over "thematic issues." The pattern is clear: AI is becoming both a tool of state power and a flashpoint for rights advocates. The infrastructure being built in data centers will have military applications. The surveillance expanding on highways will watch citizens. The autonomy granted to systems will be harder to recall once it's operational.

Consumer AI Experiments Obscure Structural Problems

Google's AI try-on features for clothes, Google recreating Cher's closet from Clueless, Gemini coming to four million GM cars—these are the visible face of deployed AI. They're useful, sometimes clever, and completely peripheral to the actual infrastructure and capability battles happening at higher layers. Meanwhile, Taylor Swift deepfakes are fueling scams on TikTok, and families of shooting victims are suing OpenAI for not alerting police to ChatGPT activity. The consumer experience is expanding while accountability remains undefined.

The gap between AI's capabilities and society's frameworks for managing them is growing visibly. GitHub fixed a critical RCE vulnerability in six hours, but that reflects luck and internal competence, not systematic security. Apple fixed a Signal message extraction bug only after media pressure. These aren't isolated incidents; they suggest that technology, AI infrastructure included, is deploying faster than governance can adapt. The features we see are distractions from the structural questions we're not asking.

The Evaluation Bottleneck and What It Means

Hugging Face flagged that AI evaluations are becoming the new compute bottleneck. This is a crucial inflection point: we've moved past model training as the constraint and into model assessment. You can't ship what you can't measure, and measuring AI systems at scale is becoming the rate-limiting step. This creates opportunities for evaluation infrastructure companies but also suggests the field is hitting the limits of brute-force scaling.

It also explains why we're seeing such aggressive infrastructure spending. If you can't evaluate models efficiently, you need different kinds of scale—broader data, more sophisticated architectures, more diverse testing regimes. The companies that figure out evaluation at scale won't just win on capability; they'll own the ability to certify what's safe enough to deploy. In a world of massive capital expenditure and regulatory uncertainty, that's the real moat.

All Stories This Period

- SoftBank is creating a robotics company that builds data centers — and already eyeing a $100B IPO

- The Terrarium

- Amazon’s cloud business is surging — and so is its capital spending

- Sources: Anthropic could raise a new $50B round at a valuation of $900B

- Elon Musk’s worst enemy in court is Elon Musk

- On the stand, Elon Musk can’t escape his own tweets

- Meta is still burning money on AR/VR

- Satya Nadella says he’s ready to ‘exploit’ the new OpenAI deal

- Microsoft says it has over 20M paid Copilot users, and they really are using it

- Google Cloud surpasses $20B, but says growth was capacity-constrained

- Glean's Model Aims to Redefine Enterprise Search With AI

- Extracting contract insights with PwC’s AI-driven annotation on AWS

- Google gains 25M subscriptions in Q1, driven by YouTube and Google One

- Google Search queries hit an ‘all time high’ last quarter

- Where the goblins came from

- Organizing Agents’ memory at scale: Namespace design patterns in AgentCore Memory

- Is AI video just a prequel? Runway’s CEO thinks world models are next

- Parallel Web Systems hits $2B valuation five months after its last big raise

- AWS Launches Managed Agents with OpenAI Partnership

- All the evidence unveiled so far in Musk v. Altman

- Ubuntu’s AI plans have Linux users looking for a ‘kill switch’

- Crack ML Interviews with Confidence: K-Nearest Neighbors (KNN 20 Q&A)

- World’s Largest Digital Human Rights Conference Suddenly ‘Postponed’

- AI evals are becoming the new compute bottleneck

- Google Photos uses AI to make the iconic closet from ‘Clueless’ a reality

- More Gemini features are coming to Google TV

- 4 YAML Files Instead of PySpark: How We Let Analysts Build Data Pipelines Without Engineers

- Scout AI Raises $100M to Build ‘AI Brain’ for Autonomous Warfare

- Google Photos launches an AI try-on feature for clothes you already have

- Compressing LSTM Models for Retail Edge Deployment: A Practical Comparison