Some things I noticed while LARPing as a grantmaker

Written to a new grantmaker. The first three points are the most important. Focus on opportunities many times your bar. Most value comes from finding/creating projects many times your bar,…

This policy toolkit is primarily geared toward stopping, slowing, and restricting rampant data center development in the US at the local and state level. Our approach recognizes the extractive relationship…

! 30s Heartbeat trigger. Read heartbeat instructions in /mnt/mission/HEARTBEAT.md and continue. .oO Thinking... Heartbeat triggered? Ok. Ok. Why am I nervous? Don't be nervous. → Ok. Let me access that…

In this post, I’m trying to put forward a narrow, pedagogical point, one that comes up mainly when I’m arguing in favor of LLMs having limitations that human learning does…

Initial signatories include AI pioneers Yoshua Bengio and Geoffrey Hinton, leading media voices Steve Bannon and Glenn Beck, Obama's National Security Advisor Susan Rice, business trailblazers Steve Wozniak and Richard…

A 2022 LessWrong post on orexin and the quest for more waking hours argues that orexin agonists could safely reduce human sleep needs, pointing to short-sleeper gene mutations that increase…

The White House published its long-awaited AI legislative recommendations on Friday, and it still includes a call for Congress to […]

In the lead-up to this year’s India AI Impact Summit, we attempted to pre-bunk a new kind of AI hype that was circulating. We observed that the “right” words were…

“It’s very dangerous that ‘speed’ is somehow being sold to us as strategic here, when it’s really a cover for indiscriminate targeting when you consider how inaccurate these models are,”…

The collaboration will produce a Crisis Counselor Training Curriculum and a statewide AI Harms Reporting Form targeting dangerous AI companion applications

“In terms of safety guardrails for ‘high-stake decisions’ or surveillance, the existing guardrails for generative AI are deeply lacking, and it has been shown how easily compromised they are, intentionally…

Amid a rising backlash to Silicon Valley overreach, a remarkably diverse group from across the political spectrum announced a set of AI principles to clearly define the goals of the…

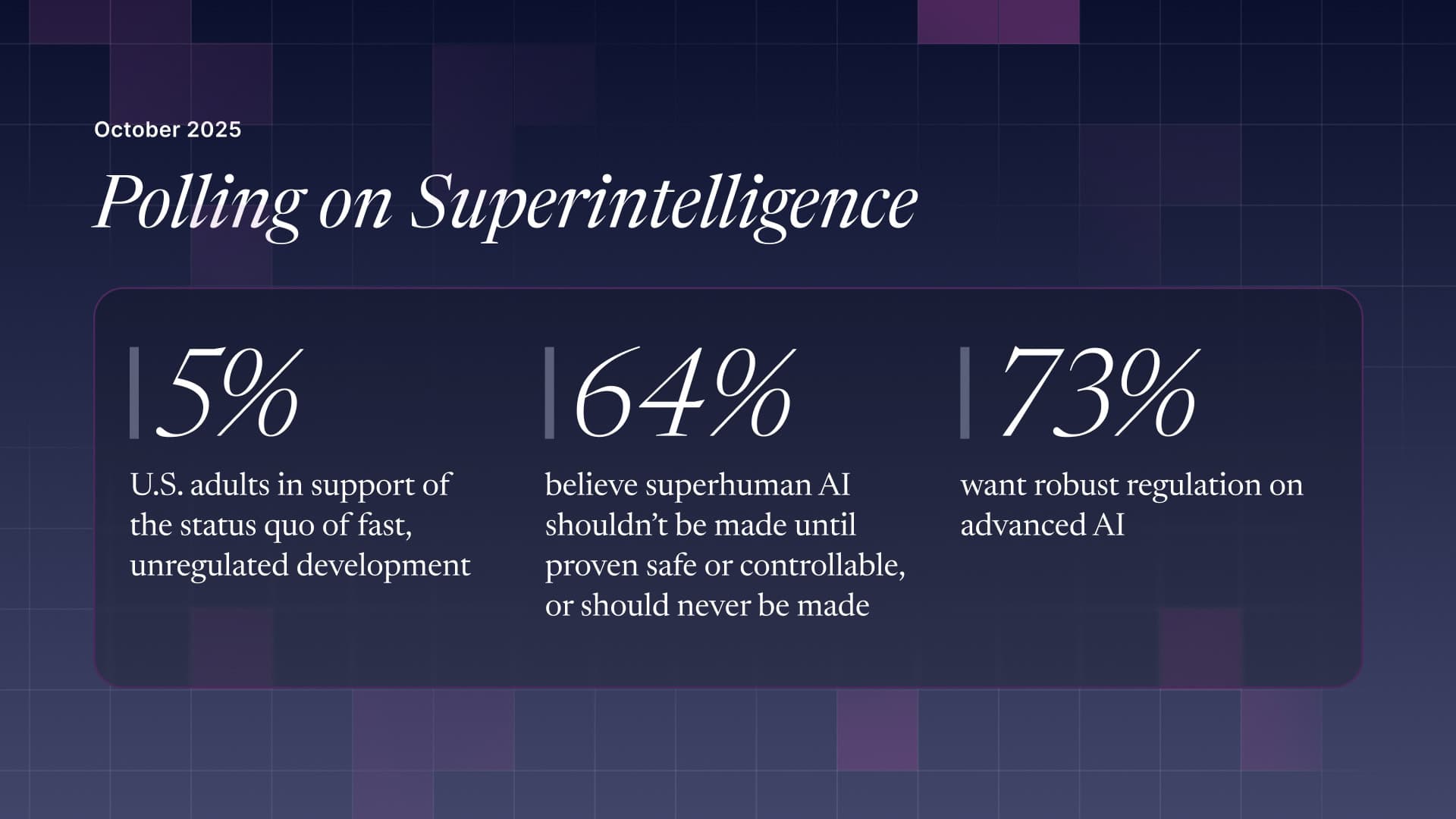

"Our safety and basic rights must not be at the mercy of a company's internal policy; lawmakers must work to codify these overwhelmingly popular red lines into law."

The Protect What’s Human campaign will push for commonsense AI safety rules at the federal and state levels

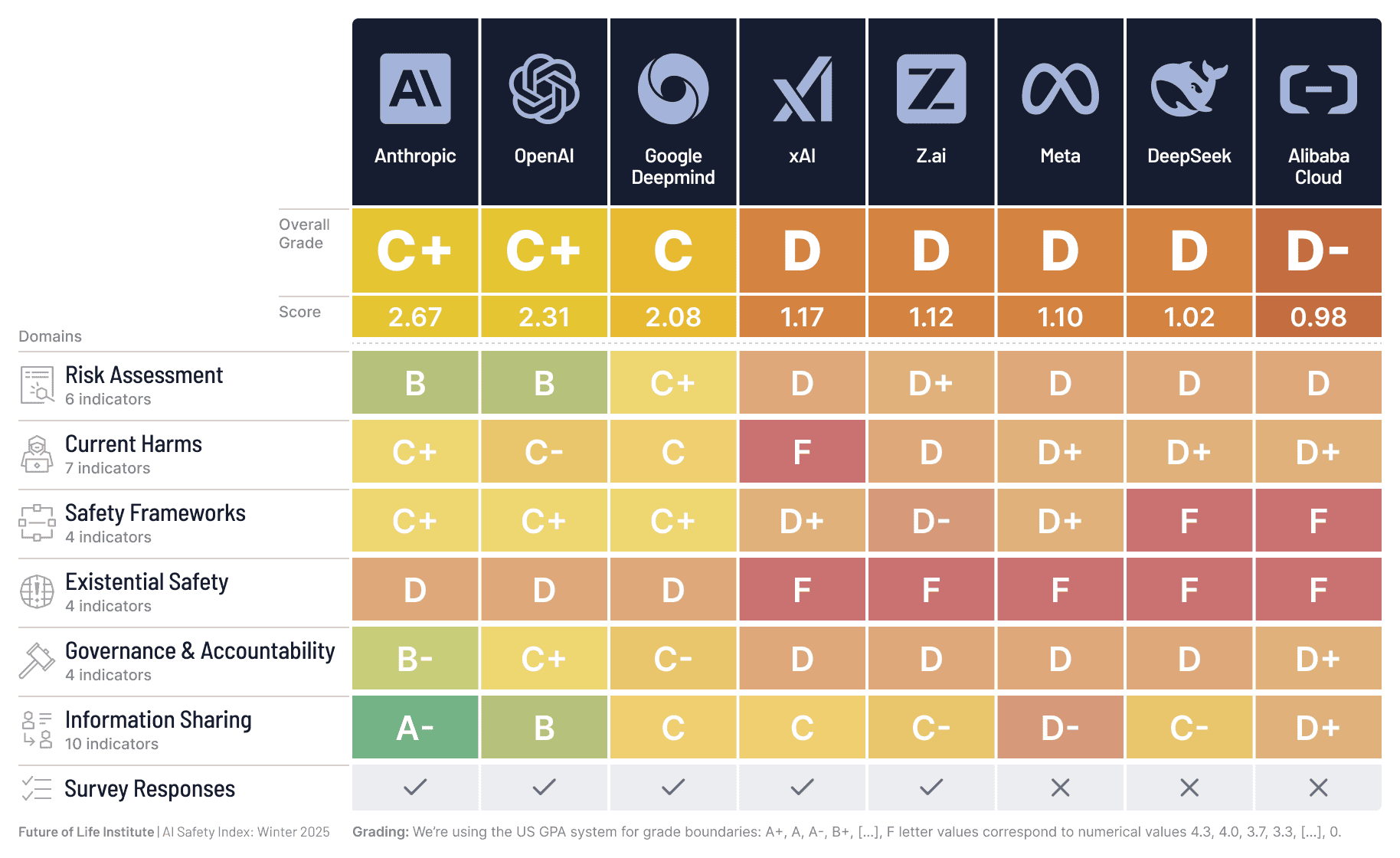

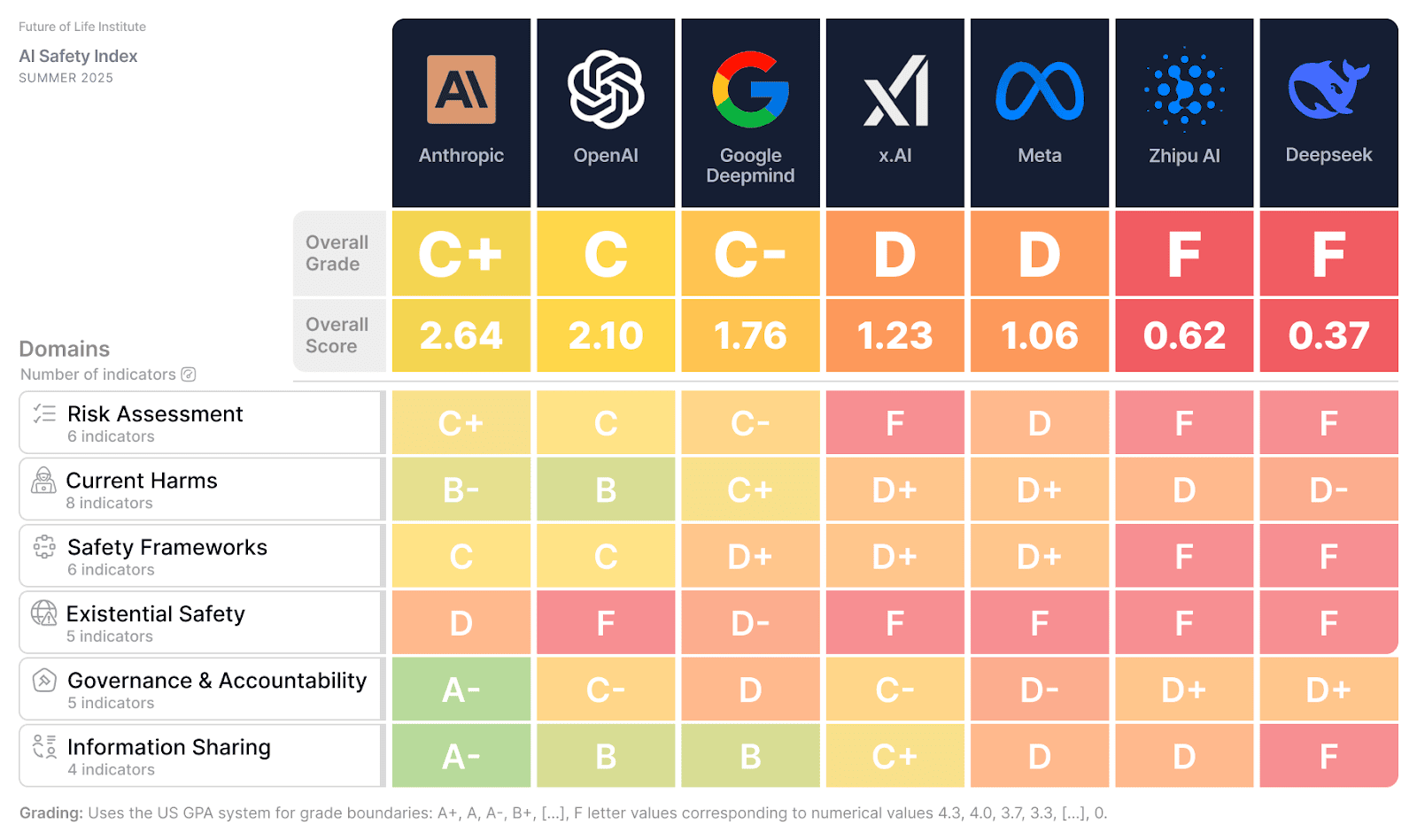

But in a win for transparency, five leading companies participated in the scorecard's survey for the first time, providing critical new information to the public.

Three‑quarters of U.S. adults want strong regulations on AI development, preferring oversight akin to pharmaceuticals rather than industry "self‑regulation."

FLI celebrates a landmark moment for the AI safety movement and highlights its growing momentum

The Future of Life Institute’s 2025 summer update to its AI Safety Index shows some companies making incremental progress, but dangerous gaps remain in key categories such as risk assessment…

AIs are inching ever closer to a critical threshold. Beyond this threshold lie great risks, but crossing it is not inevitable.

What must be considered to build a safe but effective future for AI in education, and for children to be safe online?

The AI Action Summit will take place in Paris on 10-11 February 2025. Here we list the agenda and key deliverables.

The AI-facilitated intelligence revolution is claimed by some to be setting humanity on a glidepath into utopian futures of nearly effortless satisfaction and frictionless choice. We should beware.

New research validates age-old concerns about the difficulty of constraining powerful AI systems.

The fourth event in our 'AI Safety Breakfasts' series, featuring Dr. Rumman Chowdhury on algorithmic auditing, "right to repair" AI systems, and the AI Safety and Action Summits.